Locality-aware Attention Network with Discriminative Dynamics Learning for Weakly Supervised Anomaly Detection

Paper and Code

Aug 11, 2022

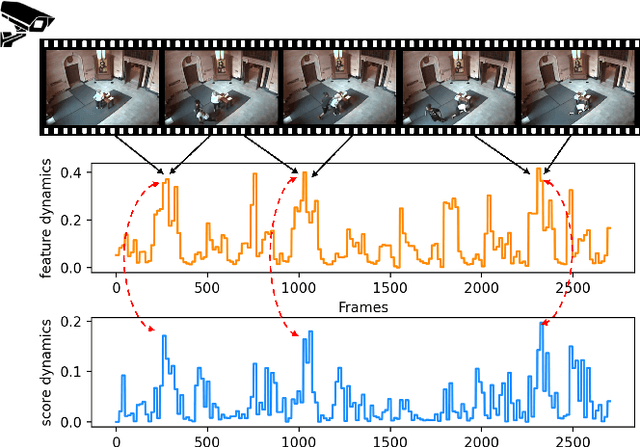

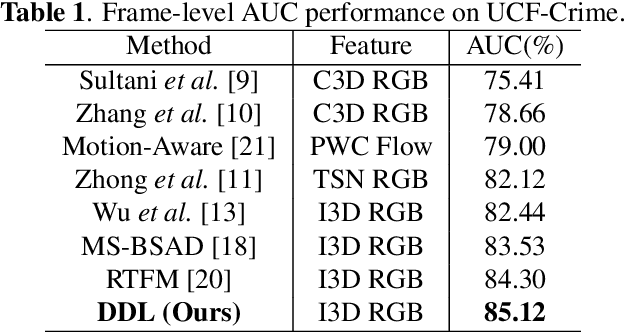

Video anomaly detection is recently formulated as a multiple instance learning task under weak supervision, in which each video is treated as a bag of snippets to be determined whether contains anomalies. Previous efforts mainly focus on the discrimination of the snippet itself without modeling the temporal dynamics, which refers to the variation of adjacent snippets. Therefore, we propose a Discriminative Dynamics Learning (DDL) method with two objective functions, i.e., dynamics ranking loss and dynamics alignment loss. The former aims to enlarge the score dynamics gap between positive and negative bags while the latter performs temporal alignment of the feature dynamics and score dynamics within the bag. Moreover, a Locality-aware Attention Network (LA-Net) is constructed to capture global correlations and re-calibrate the location preference across snippets, followed by a multilayer perceptron with causal convolution to obtain anomaly scores. Experimental results show that our method achieves significant improvements on two challenging benchmarks, i.e., UCF-Crime and XD-Violence.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge