Linear interpolation gives better gradients than Gaussian smoothing in derivative-free optimization

Paper and Code

Jun 02, 2019

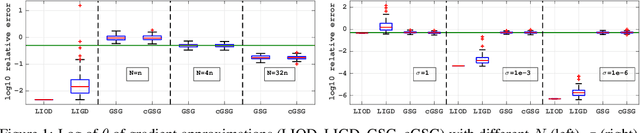

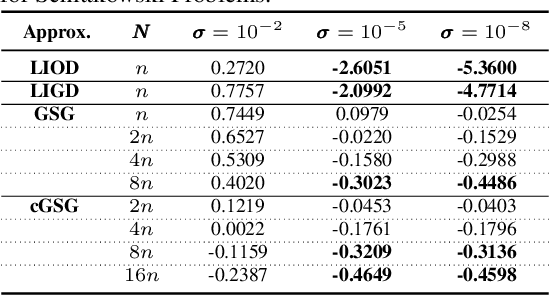

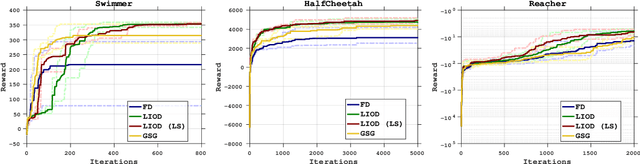

In this paper, we consider derivative free optimization problems, where the objective function is smooth but is computed with some amount of noise, the function evaluations are expensive and no derivative information is available. We are motivated by policy optimization problems in reinforcement learning that have recently become popular [Choromaski et al. 2018; Fazel et al. 2018; Salimans et al. 2016], and that can be formulated as derivative free optimization problems with the aforementioned characteristics. In each of these works some approximation of the gradient is constructed and a (stochastic) gradient method is applied. In [Salimans et al. 2016] the gradient information is aggregated along Gaussian directions, while in [Choromaski et al. 2018] it is computed along orthogonal direction. We provide a convergence rate analysis for a first-order line search method, similar to the ones used in the literature, and derive the conditions on the gradient approximations that ensure this convergence. We then demonstrate via rigorous analysis of the variance and by numerical comparisons on reinforcement learning tasks that the Gaussian sampling method used in [Salimans et al. 2016] is significantly inferior to the orthogonal sampling used in [Choromaski et al. 2018] as well as more general interpolation methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge