Lexicase selection in Learning Classifier Systems

Paper and Code

Jul 10, 2019

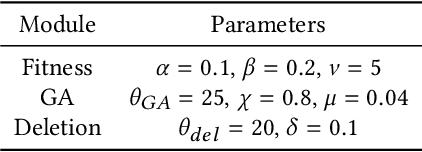

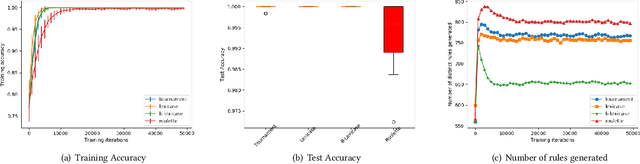

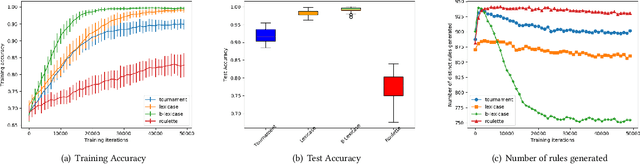

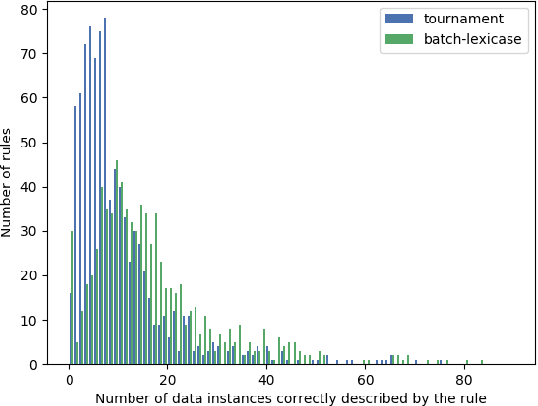

The lexicase parent selection method selects parents by considering performance on individual data points in random order instead of using a fitness function based on an aggregated data accuracy. While the method has demonstrated promise in genetic programming and more recently in genetic algorithms, its applications in other forms of evolutionary machine learning have not been explored. In this paper, we investigate the use of lexicase parent selection in Learning Classifier Systems (LCS) and study its effect on classification problems in a supervised setting. We further introduce a new variant of lexicase selection, called batch-lexicase selection, which allows for the tuning of selection pressure. We compare the two lexicase selection methods with tournament and fitness proportionate selection methods on binary classification problems. We show that batch-lexicase selection results in the creation of more generic rules which is favorable for generalization on future data. We further show that batch-lexicase selection results in better generalization in situations of partial or missing data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge