Learning to Broadcast for Ultra-Reliable Communication with Differential Quality of Service via the Conditional Value at Risk


Dec 03, 2021

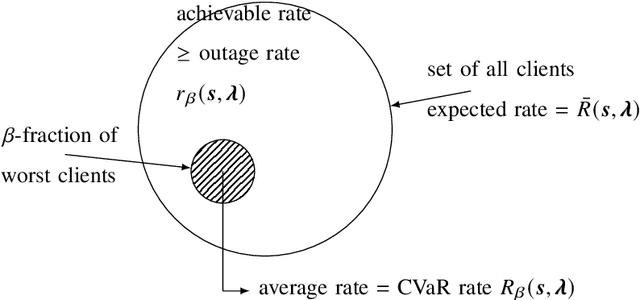

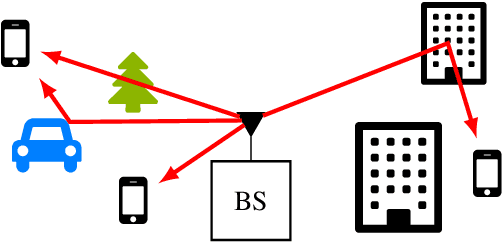

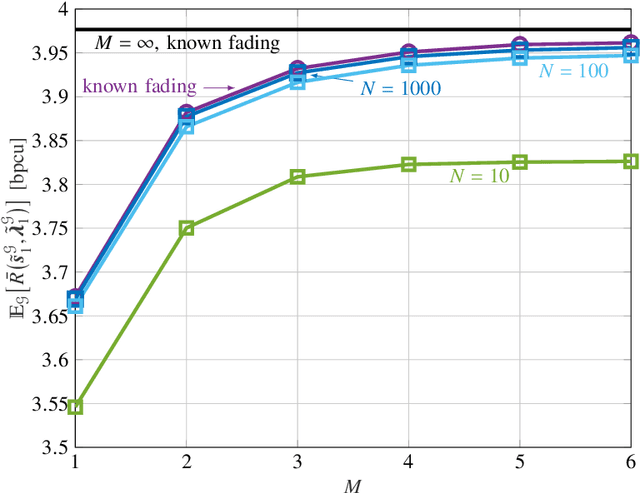

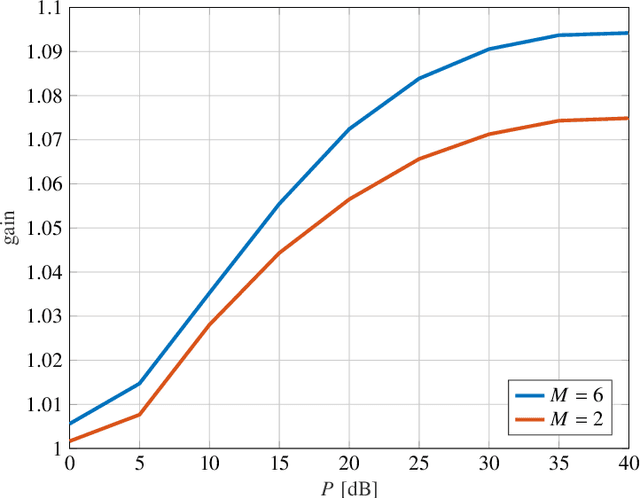

Broadcast/multicast communication systems are typically designed to optimize the outage rate criterion, which neglects the performance of the fraction of clients with the worst channel conditions. Targeting ultra-reliable communication scenarios, this paper takes a complementary approach by introducing the conditional value-at-risk (CVaR) rate as the expected rate of a worst-case fraction of clients. To support differential quality-of-service (QoS) levels within this class of clients, layered division multiplexing (LDM), which enables decoding at different rates, is applied. Focusing on a practical scenario in which the transmitter does not know the fading distribution, layer allocation is optimized based on a dataset sampled during deployment. The optimality gap caused by the limited amount of available data is bounded via a generalization analysis, and the sample complexity is shown to increase as the designated fraction of worst-case clients decreases. In light of this theoretical result, meta-learning is introduced as a means to reduce sample complexity by leveraging data from previous deployments. Numerical experiments demonstrate that LDM improves spectral efficiency even for small datasets; that, for sufficiently large datasets, the proposed mirror-descent-based layer optimization scheme achieves a CVaR rate close to that achieved when the transmitter knows the fading distribution; and that meta-learning can significantly reduce data requirements.
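To make the CVaR rate concrete, the sketch below estimates it from a sampled dataset of client SNRs and compares a single-layer transmission with a two-layer LDM allocation. It is a minimal illustration under stated assumptions, not the paper's system model or its mirror-descent optimizer. The layer model (superposition coding with in-order successive decoding, stopping at the first failed layer), the Rayleigh-fading dataset, the layer rates, and the helper names `empirical_cvar_rate`, `ldm_client_rate`, `ldm_rates` and `best_two_layer_split` are all hypothetical. The power split is chosen by a plain grid search that stands in for the learned allocation.

```python
import numpy as np


def empirical_cvar_rate(rates, alpha):
    """Lower-tail CVaR: mean rate of the worst alpha-fraction of samples.

    Handles non-integer alpha * n by giving the boundary sample a fractional
    weight (exact CVaR of the empirical distribution).
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must lie in (0, 1]")
    r = np.sort(np.asarray(rates, dtype=float))  # ascending: worst clients first
    n = r.size
    k = int(np.floor(alpha * n))  # samples lying fully inside the worst tail
    tail_mass = r[:k].sum() / n
    if k < n:
        tail_mass += (alpha - k / n) * r[k]  # partial weight on the boundary sample
    return tail_mass / alpha


def ldm_client_rate(snr, power_fracs, layer_rates):
    """Rate decoded by one client under a HYPOTHETICAL superposition/SIC model.

    Layers are decoded in order; layer l succeeds if its SINR, treating the
    not-yet-decoded layers as noise, supports layer_rates[l] (bits/s/Hz).
    Decoding stops at the first failure.
    """
    total, remaining = 0.0, 1.0
    for p, r in zip(power_fracs, layer_rates):
        remaining = max(remaining - p, 0.0)  # power fraction of undecoded layers
        sinr = p * snr / (remaining * snr + 1.0)
        if np.log2(1.0 + sinr) < r:
            break
        total += r
    return total


def ldm_rates(snrs, power_fracs, layer_rates):
    """Per-client decoded rates for a dataset of SNR samples."""
    return np.array([ldm_client_rate(s, power_fracs, layer_rates) for s in snrs])


def best_two_layer_split(snrs, layer_rates, alpha, grid=None):
    """Grid search over the first layer's power fraction (stand-in optimizer)."""
    grid = np.linspace(0.05, 0.95, 19) if grid is None else np.asarray(grid)
    scores = [empirical_cvar_rate(ldm_rates(snrs, [p, 1.0 - p], layer_rates), alpha)
              for p in grid]
    i = int(np.argmax(scores))
    return float(grid[i]), float(scores[i])


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Hypothetical dataset: Rayleigh fading with mean SNR 10 (linear).
    snrs = 10.0 * rng.exponential(1.0, size=5000)
    alpha = 0.1  # worst 10% of clients

    single = empirical_cvar_rate(ldm_rates(snrs, [1.0], [1.0]), alpha)
    p, layered = best_two_layer_split(snrs, [0.5, 1.5], alpha)
    print(f"single-layer CVaR rate: {single:.3f}")
    print(f"two-layer CVaR rate:    {layered:.3f} (first-layer power {p:.2f})")
```

Because the CVaR averages only over the worst `alpha` fraction, a smaller `alpha` leaves fewer samples in the tail for a fixed dataset size, which gives some intuition for why the abstract reports that sample complexity grows as the designated fraction decreases.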
