Learning Style Compatibility for Furniture

Paper and Code

Dec 09, 2018

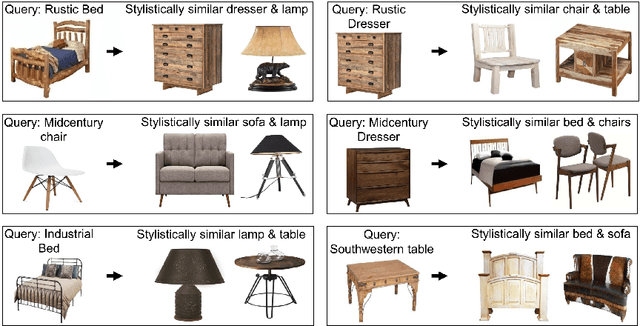

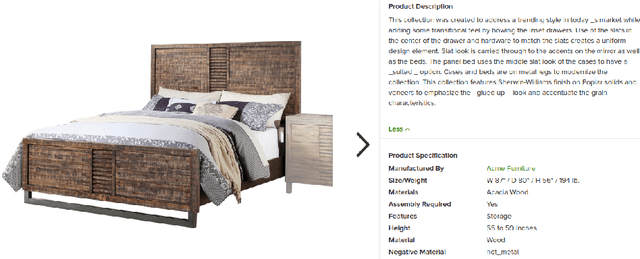

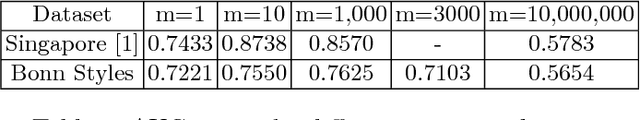

When judging style, a key question is whether a pair of objects is compatible. In this paper, we investigate how Siamese networks can be used efficiently to assess the style compatibility between images of furniture items. We show that the middle layers of pretrained CNNs capture essential information about furniture style, which allows such networks to be applied efficiently to this task. We also use a joint image-text embedding method that allows querying for stylistically compatible furniture items subject to additional text-based attribute constraints. To evaluate our methods, we collect and present a large-scale dataset of furniture images spanning different style categories, accompanied by text attributes.
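As a rough illustration of the Siamese idea described above, the sketch below passes two feature vectors through one shared projection and scores the pair by the distance between their embeddings. It is not the paper's implementation: the feature vectors are random stand-ins for mid-layer CNN activations, the names `embed`, `distance`, and `contrastive_loss` are hypothetical, and the contrastive loss with a margin is an assumed training objective that the abstract does not specify.

```python
import math
import random


def embed(features, weights):
    """Project a feature vector with weights shared by both Siamese branches."""
    return [sum(w * x for w, x in zip(row, features)) for row in weights]


def distance(a, b):
    """Euclidean distance between two embeddings (smaller = more compatible)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def contrastive_loss(d, compatible, margin=1.0):
    """Assumed objective: pull compatible pairs together, push others past the margin."""
    if compatible:
        return d ** 2
    return max(0.0, margin - d) ** 2


random.seed(0)
feat_dim, embed_dim = 8, 4

# Hypothetical shared projection; in practice this would be learned.
weights = [[random.gauss(0, 0.1) for _ in range(feat_dim)] for _ in range(embed_dim)]

# Stand-ins for mid-layer CNN features of two furniture images.
chair = [random.random() for _ in range(feat_dim)]
table = [random.random() for _ in range(feat_dim)]

d = distance(embed(chair, weights), embed(table, weights))
print("distance:", d)
print("loss if labeled compatible:", contrastive_loss(d, compatible=True))
print("loss if labeled incompatible:", contrastive_loss(d, compatible=False))
```

Because both items go through the same `weights`, the score is symmetric in the pair, which is the property that makes the Siamese setup a natural fit for pairwise compatibility.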
