Learning Self-Prior for Mesh Inpainting Using Self-Supervised Graph Convolutional Networks


May 01, 2023

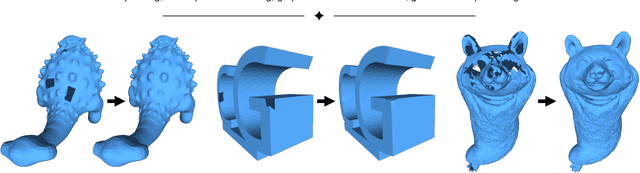

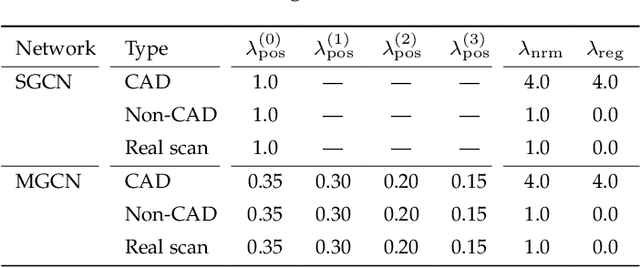

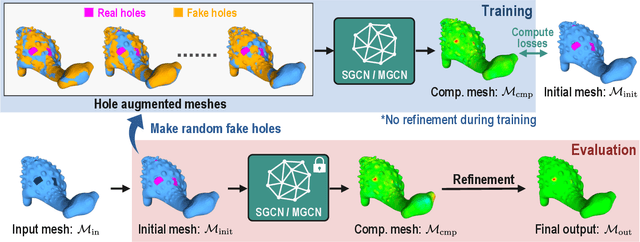

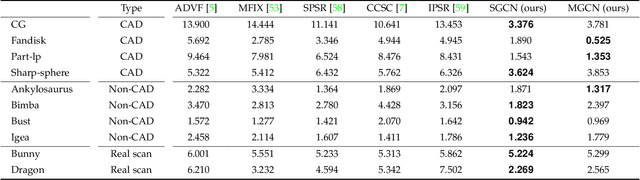

This study presents a self-prior-based mesh inpainting framework that requires only an incomplete mesh as input and no training dataset. Additionally, our method maintains the polygonal mesh format throughout the inpainting process, without converting the shape to an intermediate representation such as a voxel grid, a point cloud, or an implicit function, which are typically considered easier for deep neural networks to process. To achieve this goal, we introduce two graph convolutional networks (GCNs), a single-resolution GCN (SGCN) and a multi-resolution GCN (MGCN), both trained in a self-supervised manner. Our approach refines a watertight mesh obtained from an initial hole filling to generate a completed output mesh. Specifically, we train the GCNs to deform an oversmoothed version of the input mesh into the expected completed shape. The correct displacements at real holes are unknown, so to supervise the GCNs we use multiple sets of meshes in which several connected regions are marked as fake holes. The correct displacements are known for vertices in these fake holes, which enables network training with loss functions that assess the accuracy of the displacement vectors estimated by the GCNs. We demonstrate that our method outperforms traditional dataset-independent approaches and is more robust than other deep-learning-based methods for shapes that appear less frequently in shape datasets.
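The fake-hole supervision described in the abstract can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the uniform Laplacian smoothing, the squared-error loss, and the names `laplacian_smooth` and `fake_hole_loss` are all assumptions chosen for illustration, and the GCN that would predict the displacements is omitted entirely.

```python
import numpy as np


def laplacian_smooth(vertices, edges, iterations=10, lam=0.5):
    """Uniform Laplacian smoothing (assumed stand-in for the oversmoothing step)."""
    n = len(vertices)
    # Symmetric adjacency matrix built from an undirected edge list.
    adj = np.zeros((n, n))
    adj[edges[:, 0], edges[:, 1]] = 1.0
    adj[edges[:, 1], edges[:, 0]] = 1.0
    deg = adj.sum(axis=1, keepdims=True)
    v = np.asarray(vertices, dtype=float).copy()
    for _ in range(iterations):
        # Vertices without neighbors keep their own position.
        neighbor_mean = np.where(deg > 0, adj @ v / np.maximum(deg, 1.0), v)
        v = v + lam * (neighbor_mean - v)
    return v


def fake_hole_loss(pred_disp, target_disp, fake_hole_mask):
    """Mean squared displacement error over fake-hole vertices only (assumed loss form)."""
    diff = pred_disp[fake_hole_mask] - target_disp[fake_hole_mask]
    return float(np.mean(np.sum(diff ** 2, axis=1)))


# Toy example: a tetrahedron whose vertices 0 and 3 are marked as a fake hole.
verts = np.array([[0, 0, 0], [1, 0, 0], [0, 1, 0], [0, 0, 1]], dtype=float)
edges = np.array([[0, 1], [0, 2], [0, 3], [1, 2], [1, 3], [2, 3]])
smoothed = laplacian_smooth(verts, edges)
target_disp = verts - smoothed  # known displacements back to the original shape
mask = np.array([True, False, False, True])

# A network output would replace this all-zero placeholder prediction.
loss = fake_hole_loss(np.zeros_like(target_disp), target_disp, mask)
```

Because the original vertex positions inside fake holes are known, the target displacements are available there, whereas vertices in real holes would simply be excluded from the mask.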
