Learning Relative Interactions through Imitation


Sep 24, 2021

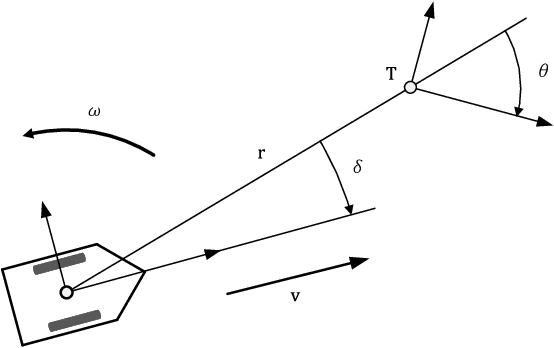

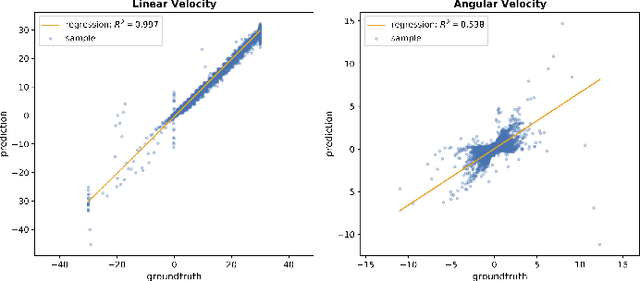

In this project, we train a neural network through imitation learning to perform specific interactions between a robot and objects in its environment. In particular, we tackle the task of moving the robot to a fixed pose relative to a given object, and then extend the method to handle arbitrary poses around that object. We show that a simple network, trained on relatively little data, reaches very good performance on the fixed-pose task, while more work is needed before it performs the arbitrary-pose task satisfactorily. We also explore how ambiguities in the sensor readings, in particular those caused by symmetries in the target object, affect the behaviour of the learned controller.
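To make "a pose relative to an object" concrete, here is a minimal sketch assuming planar poses written as `(x, y, theta)`. The function names `compose_se2` and `wrap_angle` and the example numbers are illustrative assumptions, not taken from the paper or its code. It shows how a desired pose given in the object's frame could be mapped to a goal pose in the world frame.

```python
import math


def wrap_angle(angle):
    """Wrap an angle in radians to the interval [-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


def compose_se2(frame, pose_in_frame):
    """Express a planar pose given in `frame` coordinates in world coordinates.

    Both arguments are (x, y, theta) tuples; `frame` is itself a world pose.
    This is standard SE(2) composition, not the paper's specific method.
    """
    fx, fy, fth = frame
    px, py, pth = pose_in_frame
    x = fx + math.cos(fth) * px - math.sin(fth) * py
    y = fy + math.sin(fth) * px + math.cos(fth) * py
    return (x, y, wrap_angle(fth + pth))


# Hypothetical example: an object at (1, 2) rotated by 90 degrees, and a
# desired robot pose 1 m along the object's x-axis, facing back toward it.
object_pose = (1.0, 2.0, math.pi / 2)
relative_goal = (1.0, 0.0, math.pi)
world_goal = compose_se2(object_pose, relative_goal)
```

In the fixed-pose task, `relative_goal` would stay constant, whereas the arbitrary-pose task would vary it. For a symmetric object, several distinct values of `object_pose` can produce the same sensor readings, which is one way to picture the ambiguity the abstract mentions.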
