Learning PSD-valued functions using kernel sums-of-squares


Nov 22, 2021

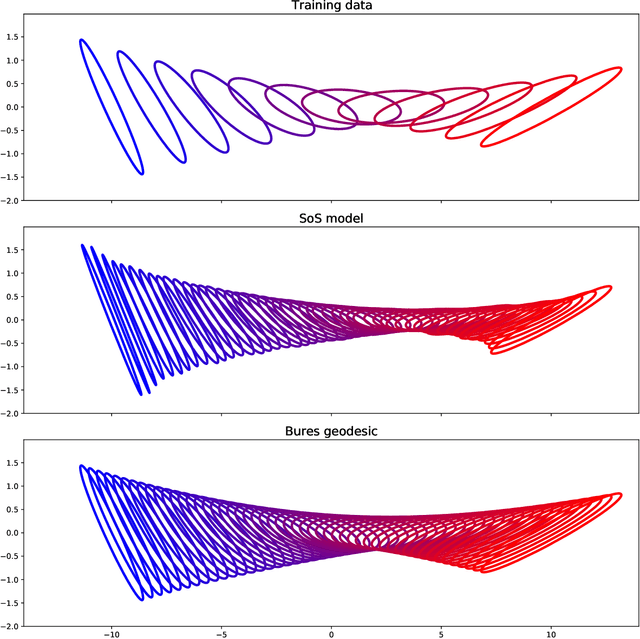

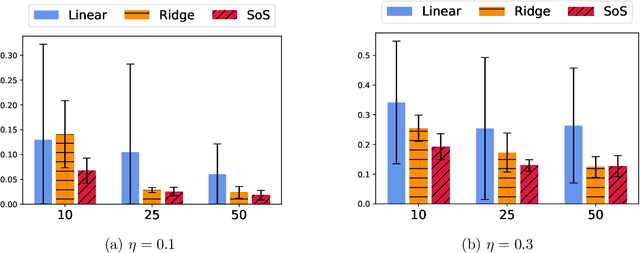

Shape constraints such as positive semi-definiteness (PSD) for matrices or convexity for functions play a central role in many applications in machine learning and the sciences, including metric learning, optimal transport, and economics. Yet very few function models exist that enforce PSD-ness or convexity with both good empirical performance and theoretical guarantees. In this paper, we introduce a kernel sum-of-squares model for functions that take values in the PSD cone, extending the kernel sum-of-squares models recently proposed to encode non-negative scalar functions. We provide a representer theorem for this class of PSD functions, show that it constitutes a universal approximator of PSD functions, and derive eigenvalue bounds in the case of subsampled equality constraints. We then apply our results to modeling convex functions by enforcing a kernel sum-of-squares representation of their Hessian, and show that any smooth and strongly convex function can be represented in this way. Finally, we illustrate our methods on a PSD matrix-valued regression task and on scalar-valued convex regression.
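To make the idea of a PSD-valued kernel sum-of-squares model concrete, here is a minimal NumPy sketch. It assumes a finite-dimensional form $F(x) = \sum_{i,j} k(x, x_i)\, k(x, x_j)\, B_{ij}$, where the $B_{ij}$ are the $d \times d$ blocks of a PSD matrix $B$ of size $nd \times nd$. This parametrization, the Gaussian kernel, and the names `gaussian_kernel`, `psd_sos_model`, `anchors`, and `bandwidth` are illustrative assumptions, not the paper's code, and no fitting procedure is shown.

```python
import numpy as np

def gaussian_kernel(x, anchors, bandwidth=1.0):
    """Kernel vector (k(x, x_1), ..., k(x, x_n)) for a Gaussian kernel (assumed choice)."""
    sq_dists = np.sum((anchors - x) ** 2, axis=1)
    return np.exp(-sq_dists / (2.0 * bandwidth ** 2))

def psd_sos_model(x, anchors, B, d, bandwidth=1.0):
    """Evaluate F(x) = sum_{i,j} k(x, x_i) k(x, x_j) B_ij, a d x d matrix.

    B is an (n*d, n*d) PSD matrix viewed as n x n blocks of size d x d.
    """
    v = gaussian_kernel(x, anchors, bandwidth)   # shape (n,)
    # Phi stacks the blocks v_i * I_d, so Phi has shape (n*d, d).
    Phi = np.kron(v[:, None], np.eye(d))
    # For any u, u^T F(x) u = (Phi u)^T B (Phi u) >= 0 because B is PSD.
    return Phi.T @ B @ Phi

# Hypothetical toy setup: n anchor points in R^p, outputs in the d x d PSD cone.
rng = np.random.default_rng(0)
n, p, d = 5, 2, 3
anchors = rng.normal(size=(n, p))
L = rng.normal(size=(n * d, n * d))
B = L @ L.T                                      # PSD by construction

F = psd_sos_model(rng.normal(size=p), anchors, B, d)
min_eig = np.linalg.eigvalsh(F).min()            # non-negative up to round-off
```

In this sketch the PSD constraint lives entirely in the parameter $B$: any PSD $B$ yields a PSD output at every input $x$, so no pointwise constraint on the outputs is required.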
