Learning Mixtures of Plackett-Luce Models with Features from Top-$l$ Orders

Jun 06, 2020

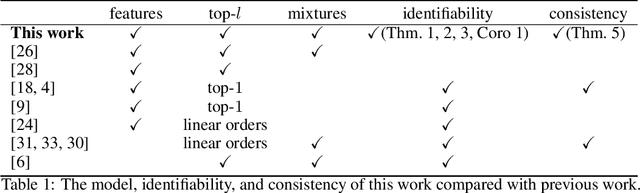

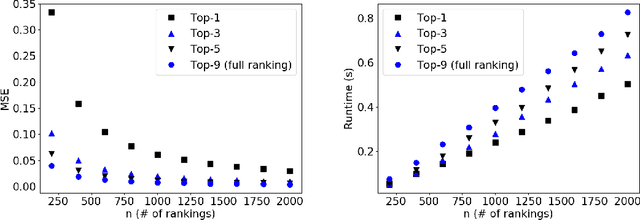

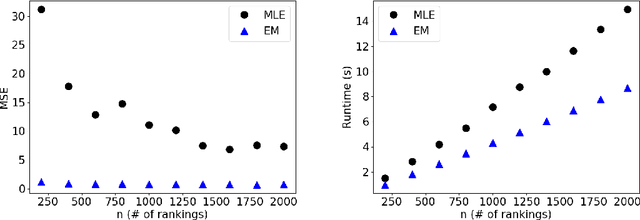

The Plackett-Luce model (PL) is one of the most popular models for preference learning. In this paper, we consider PL with features and its mixture models, where each alternative has a vector of features that may differ across agents. Such models significantly generalize the standard PL but are not as well investigated in the literature. We extend mixtures of PLs with features to models that generate top-$l$ orders and characterize their identifiability. We further prove that, when PL with features is identifiable, its MLE is consistent and its objective function is strictly concave under mild assumptions. Our experiments on synthetic data demonstrate the effectiveness of MLE on PL with features and show tradeoffs between statistical efficiency and computational efficiency as $l$ takes different values. For mixtures of PL with features, we show that an EM algorithm outperforms MLE in both MSE and runtime.
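To make the top-$l$ likelihood concrete, here is a minimal sketch of the log-probability of a single agent's top-$l$ order. It assumes the common linear parameterization in which the utility of an alternative is the inner product of a shared parameter vector `beta` with that alternative's feature vector; the abstract does not spell out the parameterization, so treat this as an illustration rather than the paper's implementation. The function and variable names (`top_l_log_likelihood`, `features`, `top_order`) are hypothetical.

```python
from itertools import permutations

import numpy as np


def top_l_log_likelihood(beta, features, top_order):
    """Log-probability of one agent's top-l order under PL with features.

    Assumption (not taken from the paper): utility_i = features[i] @ beta.

    beta:      (d,) parameter vector shared by all agents.
    features:  (m, d) array; row i is alternative i's features for this agent.
    top_order: l distinct alternative indices, most preferred first.
    """
    utilities = features @ beta
    remaining = np.ones(len(utilities), dtype=bool)
    log_lik = 0.0
    for alt in top_order:
        # Softmax choice among the alternatives not yet ranked,
        # with a max-shift for a numerically stable log-sum-exp.
        u = utilities[remaining]
        shift = u.max()
        log_lik += utilities[alt] - (shift + np.log(np.exp(u - shift).sum()))
        remaining[alt] = False
    return log_lik


# Synthetic example: 4 alternatives, 3 features, top-2 orders.
rng = np.random.default_rng(0)
X = rng.normal(size=(4, 3))

# With beta = 0 all alternatives are equally likely, so any top-2 order
# has probability (1/4) * (1/3) = 1/12.
uniform = top_l_log_likelihood(np.zeros(3), X, [2, 0])

# For any beta, the probabilities of all top-2 orders sum to one.
beta = rng.normal(size=3)
total = sum(
    np.exp(top_l_log_likelihood(beta, X, order))
    for order in permutations(range(4), 2)
)
```

Summing this quantity over agents gives a log-likelihood that could be passed to a generic optimizer. Under the linear parameterization assumed here, each term is a linear function of `beta` minus a log-sum-exp, so the sum is concave in `beta`. A smaller $l$ means fewer softmax terms per agent, which is cheaper to evaluate but uses less of each ranking.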
