Learning Intra-Batch Connections for Deep Metric Learning


Feb 15, 2021

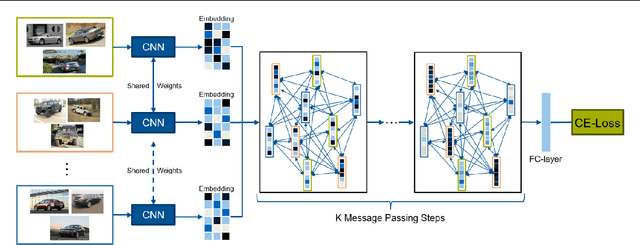

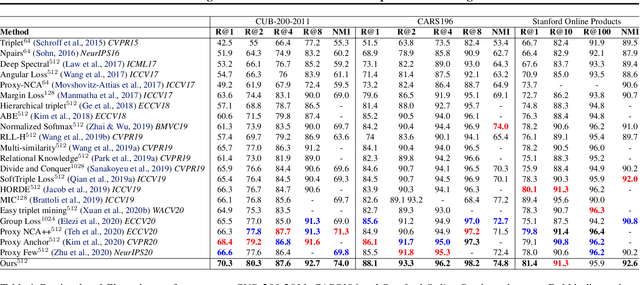

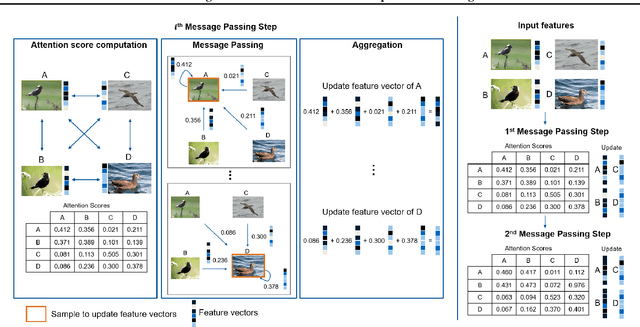

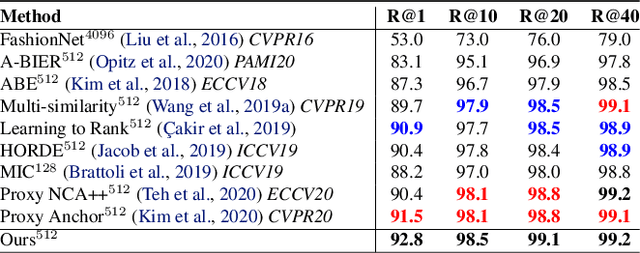

The goal of metric learning is to learn a function that maps samples to a lower-dimensional space where similar samples lie closer than dissimilar ones. In the case of deep metric learning, the mapping is performed by training a neural network. Most approaches rely on losses that consider only the relations between pairs or triplets of samples, which belong either to the same class or to two different classes. However, these approaches do not explore the embedding space in its entirety. To this end, we propose an approach based on message passing networks that takes into account all the relations in a mini-batch. We refine embedding vectors by exchanging messages among all samples in a given batch, allowing the training process to be aware of the overall structure. Since not all samples are equally important to predict a decision boundary, we use dot-product self-attention during message passing to allow samples to weight the importance of each neighbor accordingly. We achieve state-of-the-art results on clustering and image retrieval on the CUB-200-2011, Cars196, Stanford Online Products, and In-Shop Clothes datasets.
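
The idea of refining embeddings by attention-weighted message passing within a batch can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the function name `message_passing_step`, the projection matrices `w_q`, `w_k`, `w_v`, the scaling by the square root of the key dimension, the residual update, and the toy batch size and dimensions are all assumptions chosen for illustration. It treats the mini-batch as a fully connected graph and performs a single round of message passing.

```python
import numpy as np


def softmax(x, axis=-1):
    # Subtract the row-wise max for numerical stability.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def message_passing_step(h, w_q, w_k, w_v):
    """One round of attention-weighted message passing over a batch.

    h: (batch_size, dim) embeddings, one row per sample.
    w_q, w_k, w_v: (dim, dim) projection matrices (hypothetical parameters).
    Returns the refined embeddings and the attention matrix.
    """
    q = h @ w_q  # queries: what each sample is looking for
    k = h @ w_k  # keys: what each sample offers
    v = h @ w_v  # values: the message content each sample sends

    # Dot-product scores between every pair of samples in the batch.
    # The sqrt scaling is an assumption borrowed from standard attention.
    scores = q @ k.T / np.sqrt(k.shape[1])

    # Row i holds the weights sample i assigns to every sample in the batch.
    attn = softmax(scores, axis=1)

    # Aggregate incoming messages; the residual connection is an assumption.
    messages = attn @ v
    return h + messages, attn


rng = np.random.default_rng(0)
batch_size, dim = 6, 8
h = rng.normal(size=(batch_size, dim))
w_q, w_k, w_v = (rng.normal(scale=dim ** -0.5, size=(dim, dim)) for _ in range(3))

refined, attn = message_passing_step(h, w_q, w_k, w_v)
print(refined.shape)      # shape of the refined embeddings
print(attn.sum(axis=1))   # each row of attention weights sums to 1
```

In this sketch every sample attends to all samples in the batch, including itself, so each refined embedding depends on the whole batch rather than on a single pair or triplet. In a trained model the projections would be learned and several such steps could be stacked.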
