Learning General Optimal Policies with Graph Neural Networks: Expressive Power, Transparency, and Limits

Sep 21, 2021

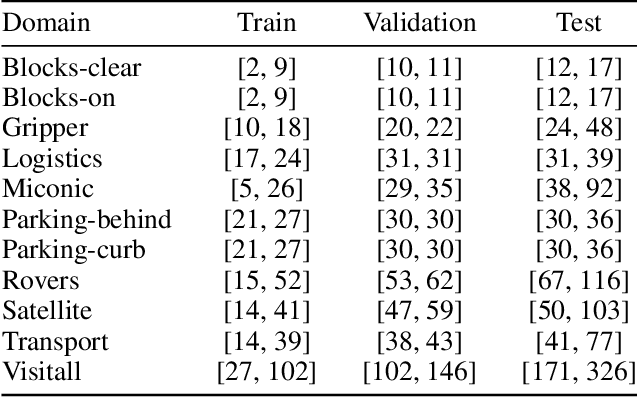

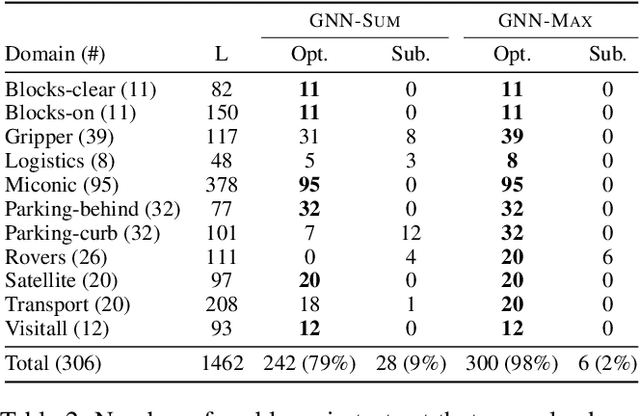

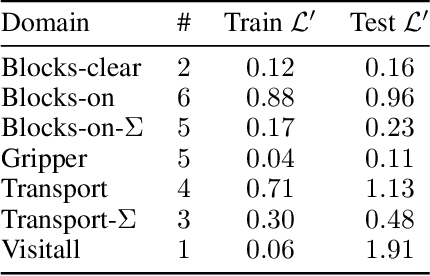

It has recently been shown that general policies for many classical planning domains can be expressed and learned in terms of a pool of features defined from the domain predicates using a description logic grammar. At the same time, most description logics correspond to a fragment of $k$-variable counting logic ($C_k$) for $k=2$, which has been shown to provide a tight characterization of the expressive power of graph neural networks. In this work, we make use of these results to understand the power and limits of using graph neural networks (GNNs) for learning optimal general policies over a number of tractable planning domains where such policies are known to exist. For this, we train a simple GNN in a supervised manner to approximate the optimal value function $V^{*}(s)$ of a number of sample states $s$. As predicted by the theory, general optimal policies are obtained in domains where general optimal value functions can be defined with $C_2$ features, but not in those requiring more expressive $C_3$ features. In addition, the features learned are in close correspondence with the features needed to express $V^{*}$ in closed form. The theory and the analysis of the domains let us understand the features that are actually learned as well as those that cannot be learned in this way, and let us move in a principled manner from a combinatorial optimization approach to learning general policies to a potentially more robust and scalable approach based on deep learning.
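The $C_2$ limit mentioned in the abstract can be illustrated with 1-dimensional Weisfeiler-Leman (1-WL) color refinement, which is known to distinguish exactly the graphs that $C_2$ distinguishes and to upper-bound what message-passing GNNs can tell apart. The following is a minimal sketch, not the paper's code: the function `wl_histograms` and the three toy graphs are assumptions made for illustration only. It shows that a 6-cycle and two disjoint triangles receive identical color histograms, although "contains a triangle" is expressible with three variables.

```python
from collections import Counter

def wl_histograms(graphs, rounds=10):
    """Jointly run 1-WL color refinement on several graphs.

    Each graph is a dict mapping a node to its list of neighbors.
    A shared palette is used so that colors are comparable across graphs.
    Returns one color histogram (Counter) per graph.
    """
    colors = [{v: 0 for v in g} for g in graphs]  # uniform initial color
    for _ in range(rounds):
        # Signature = own color plus the sorted multiset of neighbor colors.
        sigs = [
            {v: (c[v], tuple(sorted(c[u] for u in g[v]))) for v in g}
            for g, c in zip(graphs, colors)
        ]
        # Map every distinct signature (over all graphs) to a small integer.
        all_sigs = sorted({s for d in sigs for s in d.values()})
        palette = {s: i for i, s in enumerate(all_sigs)}
        colors = [{v: palette[s] for v, s in d.items()} for d in sigs]
    return [Counter(c.values()) for c in colors]

# Hypothetical toy graphs (not taken from the paper's planning domains).
six_cycle = {i: [(i - 1) % 6, (i + 1) % 6] for i in range(6)}
two_triangles = {0: [1, 2], 1: [0, 2], 2: [0, 1],
                 3: [4, 5], 4: [3, 5], 5: [3, 4]}
path6 = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3, 5], 5: [4]}

h_cycle, h_tri, h_path = wl_histograms([six_cycle, two_triangles, path6])
same_cycle_vs_triangles = (h_cycle == h_tri)  # 1-WL cannot separate these
same_cycle_vs_path = (h_cycle == h_path)      # node degrees already differ
```

Under this sketch, a value function that depends on a property separating the first two graphs would fall outside what a plain message-passing GNN can represent, which mirrors the $C_2$ versus $C_3$ distinction drawn in the abstract.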
