Learning by Cheating : An End-to-End Zero Shot Framework for Autonomous Drone Navigation

Nov 11, 2021

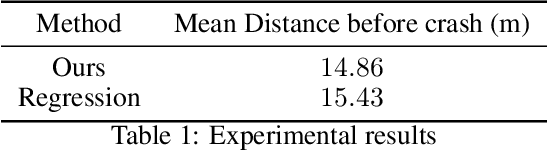

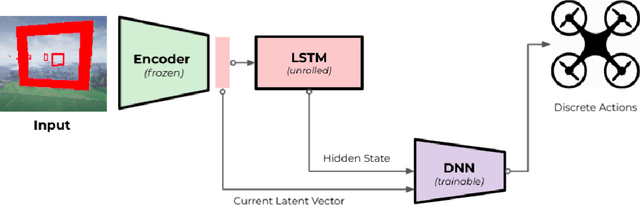

This paper proposes a novel framework for autonomous drone navigation through cluttered environments. Control policies are learnt in a low-level environment during training and applied, without further training, to a more complex environment at inference time. The controller is tricked into believing that the robot is still in the training environment while it is actually navigating the more complex one. The framework can be adapted to reuse simple policies in more complex tasks. We also show that it can serve as an interpretation tool for reinforcement learning algorithms.
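To make the "tricking" idea concrete, here is a minimal sketch of one way such a wrapper could look. It is not the authors' implementation: the grid-world observation format, `simple_policy`, `to_training_obs`, and the `"position"` / `"waypoint"` keys are all hypothetical names chosen for illustration, and the hand-written policy merely stands in for a learnt controller.

```python
from typing import Callable, Dict, Tuple

# Observation format assumed for the simple training environment:
# the (dx, dy) offset from the drone to its target.
TrainingObs = Tuple[int, int]


def simple_policy(obs: TrainingObs) -> str:
    """Stand-in for a controller learnt in the simple environment.

    It only knows how to step toward a target in free space.
    """
    dx, dy = obs
    if abs(dx) >= abs(dy) and dx != 0:
        return "right" if dx > 0 else "left"
    if dy != 0:
        return "up" if dy > 0 else "down"
    return "stay"


def to_training_obs(complex_obs: Dict[str, Tuple[int, int]]) -> TrainingObs:
    """Hypothetical adapter from a complex observation to a training-like one.

    Assumes some upstream module has already chosen an obstacle-free
    waypoint; the policy is shown that waypoint as if it were the target.
    """
    x, y = complex_obs["position"]
    wx, wy = complex_obs["waypoint"]
    return (wx - x, wy - y)


def make_cheating_controller(
    policy: Callable[[TrainingObs], str],
    adapter: Callable[[Dict[str, Tuple[int, int]]], TrainingObs],
) -> Callable[[Dict[str, Tuple[int, int]]], str]:
    """Wrap an unchanged policy so it only ever sees training-style inputs."""

    def controller(complex_obs: Dict[str, Tuple[int, int]]) -> str:
        return policy(adapter(complex_obs))

    return controller


controller = make_cheating_controller(simple_policy, to_training_obs)
action = controller({"position": (0, 0), "waypoint": (2, 1)})
```

The point of the design is that the policy itself is never retrained or modified; all knowledge of the complex environment lives in the adapter, which is also where one could inspect what the policy is being shown.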
