Learning a low-rank shared dictionary for object classification

Paper and Code

May 18, 2016

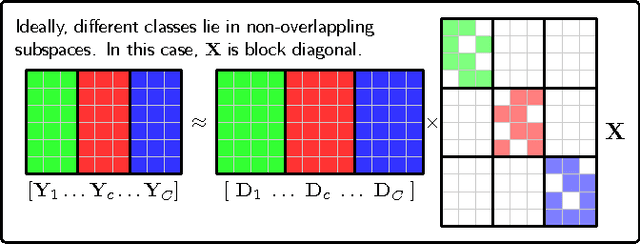

Despite the fact that different objects possess distinct class-specific features, they also usually share common patterns. Inspired by this observation, we propose a novel method to explicitly and simultaneously learn a set of common patterns as well as class-specific features for classification. Our dictionary learning framework is hence characterized by both a shared dictionary and particular (class-specific) dictionaries. For the shared dictionary, we enforce a low-rank constraint, i.e. claim that its spanning subspace should have low dimension and the coefficients corresponding to this dictionary should be similar. For the particular dictionaries, we impose on them the well-known constraints stated in the Fisher discrimination dictionary learning (FDDL). Further, we propose a new fast and accurate algorithm to solve the sparse coding problems in the learning step, accelerating its convergence. The said algorithm could also be applied to FDDL and its extensions. Experimental results on widely used image databases establish the advantages of our method over state-of-the-art dictionary learning methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge