Learnable Exposure Fusion for Dynamic Scenes


Apr 04, 2018

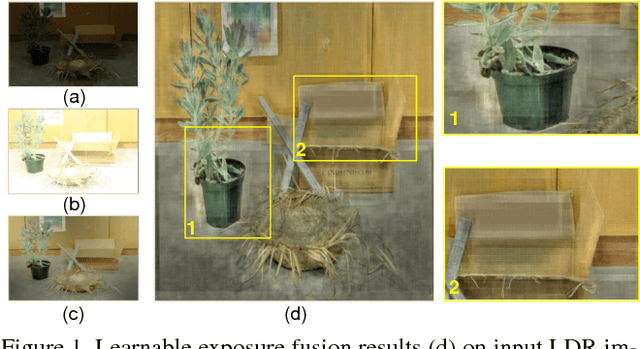

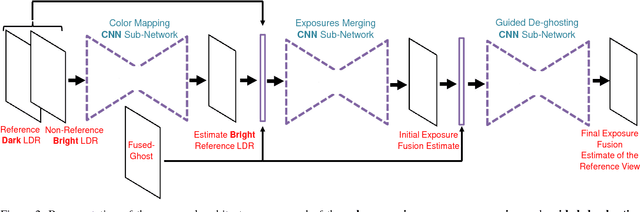

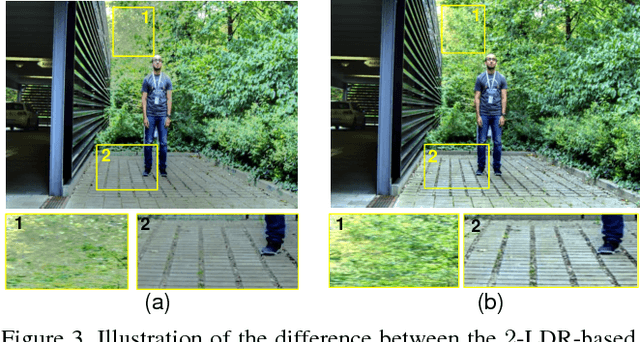

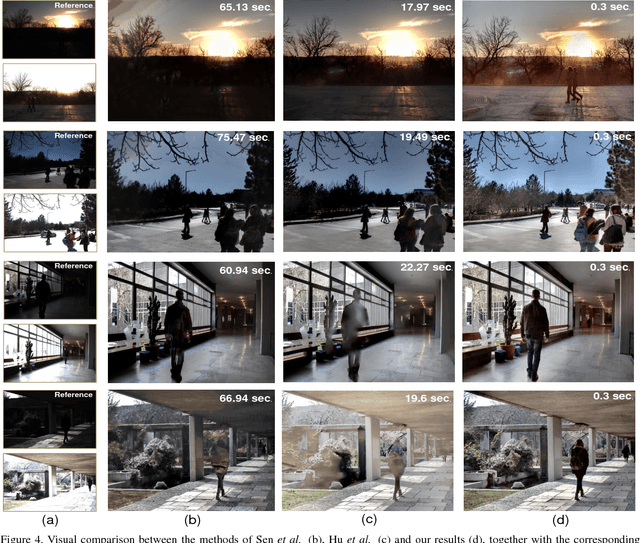

In this paper, we focus on Exposure Fusion (EF) [ExposFusi2] for dynamic scenes. The task is to fuse multiple images obtained by exposure bracketing into a single image with a high level of detail. Typically, such images cannot be obtained directly from a camera due to hardware limitations, e.g., the limited dynamic range of the sensor. A major problem in this task is that the images may not be spatially aligned due to scene motion or camera motion. The required alignment is an image registration problem, which is known to be ill-posed. Here, the images to be aligned also differ in their intensity range, which makes the problem even more difficult. To address these problems, we propose an end-to-end Convolutional Neural Network (CNN) based approach that learns to estimate the exposure fusion from 2 and 3 Low Dynamic Range (LDR) images depicting different scene contents. To the best of our knowledge, no efficient and robust CNN-based end-to-end approach exists in the literature for this kind of problem. The idea is to create a dataset with perfectly aligned LDR images to obtain ground-truth exposure fusion images. At the same time, we obtain additional LDR images with some motion that share the same exposure fusion ground truth as the perfectly aligned LDR images. This way, we can train an end-to-end CNN on misaligned LDR input images while still supervising it with a proper ground-truth exposure fusion image. We propose a specific CNN architecture to solve this problem. In various experiments, we show that the proposed approach yields excellent results.
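The dataset idea described above can be illustrated with a minimal sketch. It is not the authors' pipeline: the fusion below is a naive per-pixel weighted average using only a Gaussian well-exposedness weight (an assumed simplification of exposure fusion, without multi-resolution blending), motion is simulated by a plain horizontal shift, and all function names (`naive_fusion`, `shift_right`, `make_training_pair`) are hypothetical. The point is only that the target is computed from the aligned stack, while the network input contains a misaligned exposure.

```python
import math


def well_exposedness(v, sigma=0.2):
    """Gaussian weight favouring intensities near mid-gray (0.5). Assumed form."""
    return math.exp(-((v - 0.5) ** 2) / (2.0 * sigma ** 2))


def naive_fusion(stack):
    """Per-pixel weighted average of ALIGNED grayscale images with values in [0, 1].

    Simplified stand-in for exposure fusion; no pyramid blending is performed.
    """
    height, width = len(stack[0]), len(stack[0][0])
    fused = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            values = [img[y][x] for img in stack]
            # Small epsilon keeps the normalisation well defined.
            weights = [well_exposedness(v) + 1e-12 for v in values]
            total = sum(weights)
            fused[y][x] = sum(w * v for w, v in zip(weights, values)) / total
    return fused


def shift_right(img, dx):
    """Simulate motion: shift columns right by dx, replicating the left edge."""
    return [[row[max(x - dx, 0)] for x in range(len(row))] for row in img]


def make_training_pair(aligned_stack, moving_index, dx):
    """Return (misaligned inputs, target fused from the aligned stack)."""
    target = naive_fusion(aligned_stack)
    inputs = [
        shift_right(img, dx) if i == moving_index else img
        for i, img in enumerate(aligned_stack)
    ]
    return inputs, target


# Toy 2x3 example: one under-exposed and one over-exposed image of the same scene.
under = [[0.05, 0.10, 0.40], [0.05, 0.10, 0.40]]
over = [[0.60, 0.90, 0.95], [0.60, 0.90, 0.95]]
inputs, target = make_training_pair([under, over], moving_index=1, dx=1)
```

Here `inputs` holds the unchanged under-exposed image and a shifted over-exposed image, while `target` is identical to the fusion of the two aligned images, so a network trained on such pairs has to compensate for the misalignment itself.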
