Latency-Memory Optimized Splitting of Convolution Neural Networks for Resource Constrained Edge Devices

Paper and Code

Jul 19, 2021

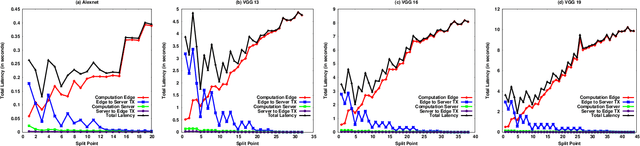

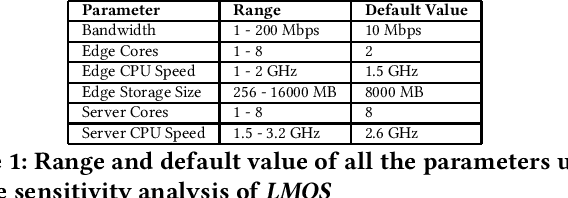

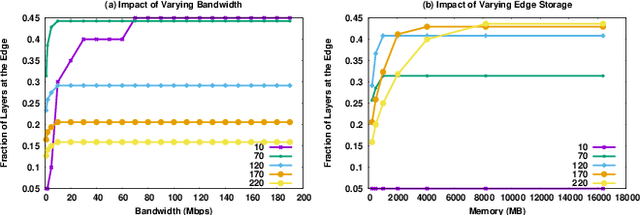

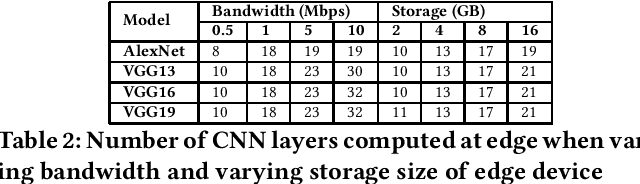

With the increasing reliance of users on smart devices, bringing essential computation at the edge has become a crucial requirement for any type of business. Many such computations utilize Convolution Neural Networks (CNNs) to perform AI tasks, having high resource and computation requirements, that are infeasible for edge devices. Splitting the CNN architecture to perform part of the computation on edge and remaining on the cloud is an area of research that has seen increasing interest in the field. In this paper, we assert that running CNNs between an edge device and the cloud is synonymous to solving a resource-constrained optimization problem that minimizes the latency and maximizes resource utilization at the edge. We formulate a multi-objective optimization problem and propose the LMOS algorithm to achieve a Pareto efficient solution. Experiments done on real-world edge devices show that, LMOS ensures feasible execution of different CNN models at the edge and also improves upon existing state-of-the-art approaches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge