L1-norm Kernel PCA

Sep 28, 2017

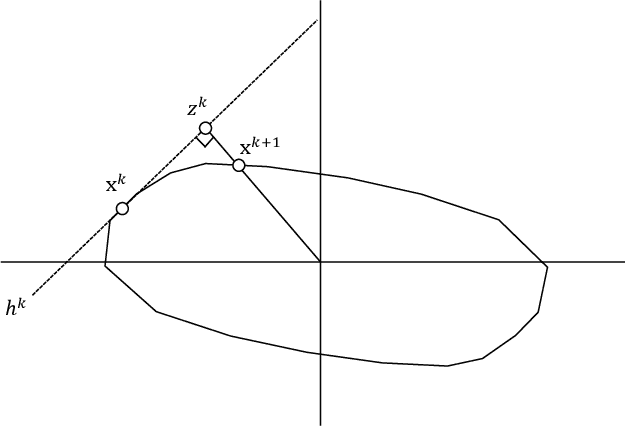

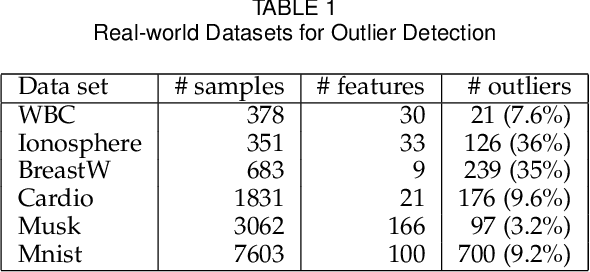

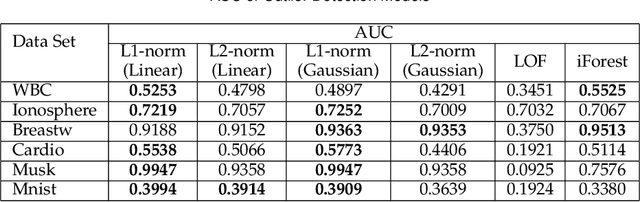

We present the first model and algorithm for L1-norm kernel PCA. While L2-norm kernel PCA has been widely studied, there has been no prior work on L1-norm kernel PCA. For this non-convex and non-smooth problem, we offer geometric insight through reformulations and present an efficient algorithm to which the kernel trick is applicable. To attest to the efficiency of the algorithm, we provide a convergence analysis that includes a linear rate of convergence. Moreover, we prove that the output of our algorithm is a locally optimal solution to the L1-norm kernel PCA problem. We also show numerically that it is robust when extracting principal components in the presence of influential outliers, and that its runtime is comparable to that of L2-norm kernel PCA. Lastly, we introduce its application to outlier detection and show that the model based on L1-norm kernel PCA outperforms the alternatives considered, especially on high-dimensional data.
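To make the problem concrete, the following is a minimal sketch and not the algorithm from the paper. It assumes the L1-norm kernel PCA objective is to maximize the sum of absolute projections, sum_i |w^T Phi(x_i)|, over unit-norm w in feature space, and it applies the sign-based fixed-point iteration known from linear L1-norm PCA, written in terms of the kernel matrix only. The function names `rbf_kernel` and `l1_kernel_pc`, the RBF kernel choice, and the lack of feature-space centering are all illustrative assumptions.

```python
import numpy as np


def rbf_kernel(X, gamma=1.0):
    """Gaussian (RBF) kernel matrix; the kernel choice is an assumption."""
    sq = np.sum(X ** 2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * (X @ X.T)
    return np.exp(-gamma * np.maximum(d2, 0.0))


def l1_kernel_pc(K, max_iter=100, seed=0):
    """Sketch of a sign-based fixed-point iteration for one L1 kernel component.

    The direction is represented as w = sum_i alpha_i * Phi(x_i), so that
    ||w||^2 = alpha^T K alpha and the projections w^T Phi(x_j) equal (K alpha)_j.
    Assumes s^T K s > 0, which holds for a strictly positive definite kernel
    matrix such as an RBF kernel on distinct points.
    """
    n = K.shape[0]
    rng = np.random.default_rng(seed)
    s = rng.choice([-1.0, 1.0], size=n)      # random initial sign vector
    for _ in range(max_iter):
        alpha = s / np.sqrt(s @ K @ s)       # unit-norm w in feature space
        proj = K @ alpha                     # projections of all points onto w
        s_new = np.where(proj >= 0, 1.0, -1.0)
        if np.array_equal(s_new, s):         # signs stable: fixed point reached
            break
        s = s_new
    alpha = s / np.sqrt(s @ K @ s)
    objective = np.abs(K @ alpha).sum()      # sum_j |w^T Phi(x_j)|
    return alpha, objective


# Usage on synthetic data (illustrative only).
X = np.random.default_rng(1).normal(size=(30, 3))
K = rbf_kernel(X, gamma=0.5)
alpha, objective = l1_kernel_pc(K)
```

Only kernel evaluations appear in the iteration, which is what makes the kernel trick applicable in this sketch. The fixed point reached depends on the random initial signs, consistent with the problem being non-convex.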
