Knowledge Discovery from Layered Neural Networks based on Non-negative Task Decomposition

Paper and Code

May 21, 2018

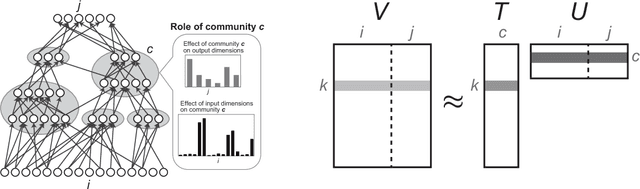

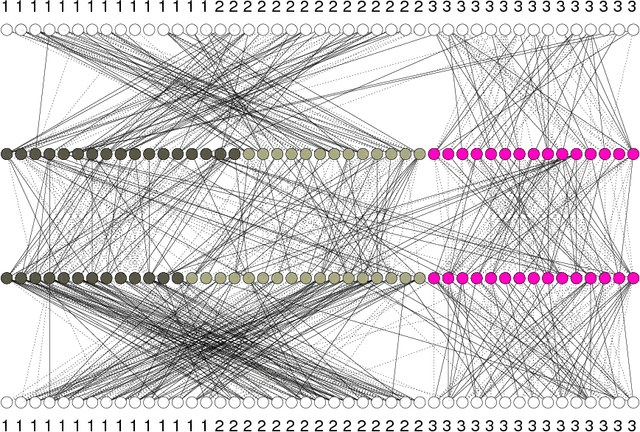

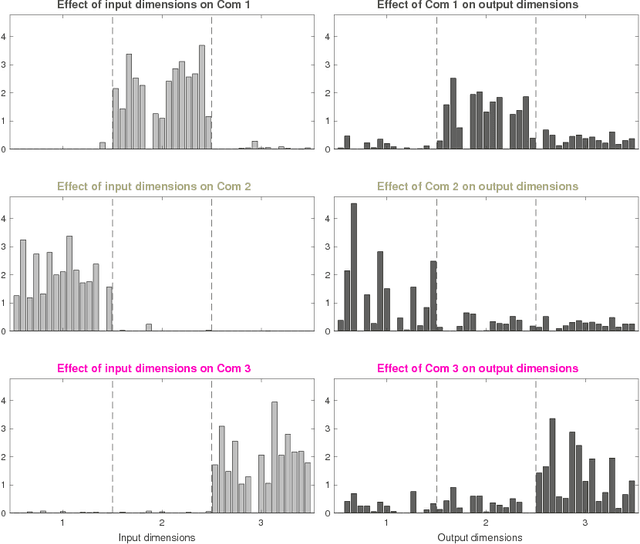

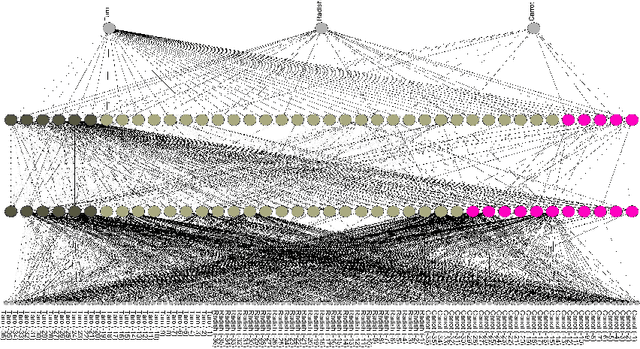

Interpretability has become an important issue in the machine learning field, along with the success of layered neural networks in various practical tasks. Since a trained layered neural network consists of a complex nonlinear relationship between large number of parameters, we failed to understand how they could achieve input-output mappings with a given data set. In this paper, we propose the non-negative task decomposition method, which applies non-negative matrix factorization to a trained layered neural network. This enables us to decompose the inference mechanism of a trained layered neural network into multiple principal tasks of input-output mapping, and reveal the roles of hidden units in terms of their contribution to each principal task.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge