iUNets: Fully invertible U-Nets with Learnable Up- and Downsampling

Jun 12, 2020

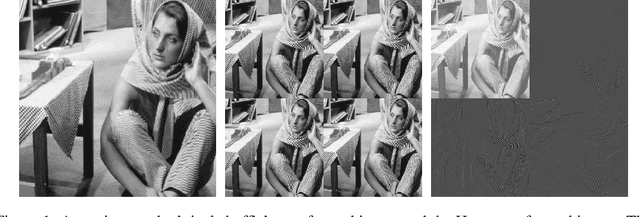

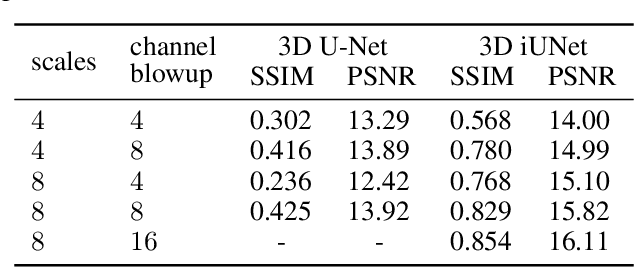

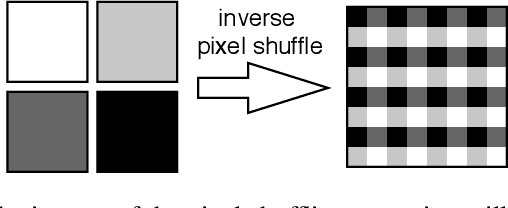

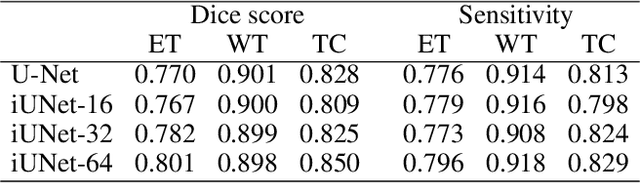

U-Nets have been established as a standard architecture for image-to-image learning problems such as segmentation and inverse problems in imaging. For large-scale data, such as that arising in 3D medical imaging, however, the U-Net has prohibitive memory requirements. Here, we present a new fully invertible U-Net-based architecture called the iUNet, which employs novel learnable and invertible up- and downsampling operations, thereby making memory-efficient backpropagation possible. This allows us to train deeper and larger networks in practice under the same GPU memory restrictions. Due to its invertibility, the iUNet can furthermore be used for constructing normalizing flows.
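To make the idea of a learnable, invertible downsampling concrete, the following is a minimal 1D sketch and not the authors' implementation. It assumes, purely for illustration, that the downsampling is a stride-2 operation with an orthogonal 2x2 kernel parametrized by a single learnable angle `theta`; the abstract does not specify the parametrization. The function names `rotation`, `invertible_downsample`, and `invertible_upsample` are hypothetical.

```python
import math


def rotation(theta):
    """2x2 orthogonal matrix: the matrix exponential of [[0, -theta], [theta, 0]]."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]


def invertible_downsample(signal, theta):
    """Map a length-2n signal to 2 channels of length n (assumed stride-2 orthogonal kernel)."""
    if len(signal) % 2:
        raise ValueError("signal length must be even")
    q = rotation(theta)
    pairs = [(signal[i], signal[i + 1]) for i in range(0, len(signal), 2)]
    ch0 = [q[0][0] * a + q[0][1] * b for a, b in pairs]
    ch1 = [q[1][0] * a + q[1][1] * b for a, b in pairs]
    return ch0, ch1


def invertible_upsample(ch0, ch1, theta):
    """Exact inverse: apply the transpose of the orthogonal matrix and interleave."""
    q = rotation(theta)
    signal = []
    for u, v in zip(ch0, ch1):
        signal.append(q[0][0] * u + q[1][0] * v)
        signal.append(q[0][1] * u + q[1][1] * v)
    return signal


x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
theta = 0.7  # stands in for a learnable parameter
ch0, ch1 = invertible_downsample(x, theta)
x_rec = invertible_upsample(ch0, ch1, theta)
max_error = max(abs(a - b) for a, b in zip(x, x_rec))
```

Because the kernel is orthogonal for every value of `theta`, the input can be recomputed from the output up to floating-point error. This is the property that lets intermediate activations be reconstructed during the backward pass rather than stored, which is what memory-efficient backpropagation relies on.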
