Is Transfer Learning Necessary for Protein Landscape Prediction?


Oct 31, 2020

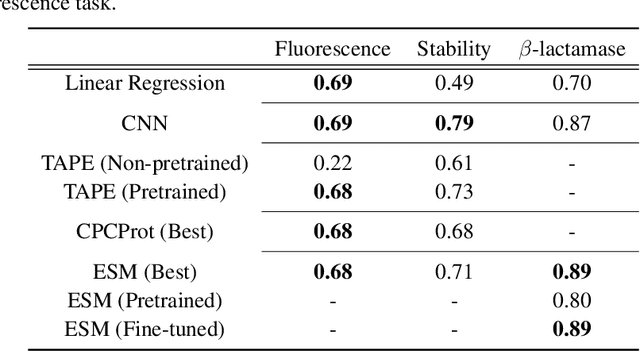

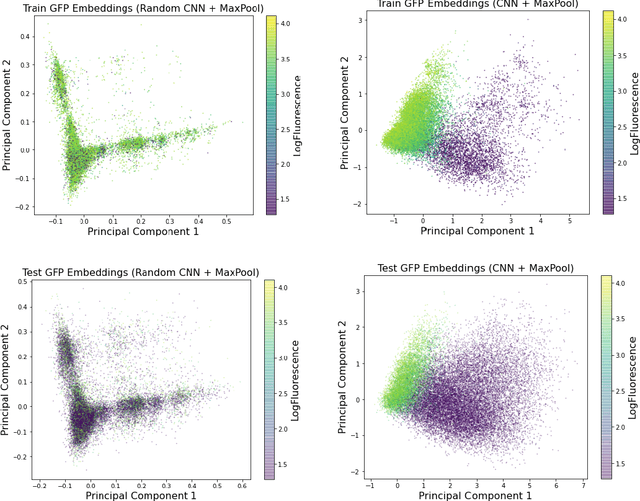

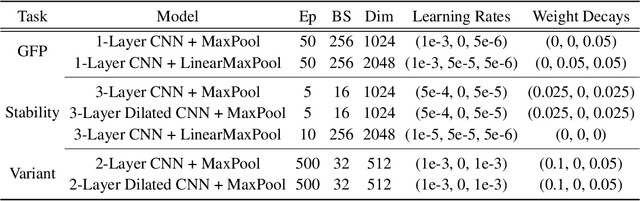

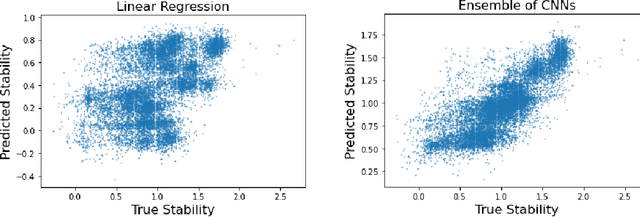

Recently, there has been great interest in learning how best to represent proteins, specifically with fixed-length embeddings. Deep learning has become a popular tool for protein representation learning because a model's hidden layers produce potentially useful vector embeddings. TAPE (Tasks Assessing Protein Embeddings) introduced a number of benchmark tasks and showed that semi-supervised learning, via pretraining language models on a large protein corpus, improved performance on downstream tasks. Two of these tasks, fluorescence prediction and stability prediction, involve learning fitness landscapes. In this paper, we show that CNN models trained solely with supervised learning compete with, and sometimes outperform, the best TAPE models, which rely on expensive pretraining on large protein datasets. These CNN models are simple and small enough to be trained in a Google Colab notebook. We also find that, on the fluorescence task, linear regression outperforms both our models and the TAPE models. The benchmark tasks proposed by TAPE are excellent measures of a model's ability to predict protein function and should continue to be used. However, we believe it is important to add baselines from simple models to put into perspective the performance of the semi-supervised models reported so far.
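To make the idea of a "simple baseline" concrete, here is a minimal sketch of a linear model on a fitness landscape. It assumes equal-length sequences (as with point mutants of one parent protein), a flattened one-hot encoding over the 20 standard amino acids, and a small ridge penalty. Plain least squares is ill-posed here, because each position's one-hot block sums to one and is therefore collinear with the intercept. Predictions are scored with Spearman's rank correlation, the metric TAPE uses for its fluorescence and stability tasks. The featurization, the penalty, and the helper names (`one_hot`, `fit_ridge`, `toy_benchmark`) are illustrative assumptions, not the paper's exact setup. The data in `toy_benchmark` is synthetic and has no connection to the TAPE datasets.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}


def one_hot(seqs):
    """Flattened one-hot encoding of equal-length sequences: shape (n, L * 20)."""
    length = len(seqs[0])
    X = np.zeros((len(seqs), length * len(AMINO_ACIDS)))
    for i, seq in enumerate(seqs):
        if len(seq) != length:
            raise ValueError("all sequences must have the same length")
        for pos, aa in enumerate(seq):
            X[i, pos * len(AMINO_ACIDS) + AA_INDEX[aa]] = 1.0
    return X


def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge regression; the intercept is not penalized (via centering)."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    gram = Xc.T @ Xc + alpha * np.eye(X.shape[1])  # positive definite for alpha > 0
    w = np.linalg.solve(gram, Xc.T @ yc)
    b = y_mean - x_mean @ w
    return w, b


def predict(X, w, b):
    return X @ w + b


def spearman(a, b):
    """Spearman's rho as the Pearson correlation of ranks (no tie averaging)."""
    ra = np.argsort(np.argsort(a))
    rb = np.argsort(np.argsort(b))
    return float(np.corrcoef(ra, rb)[0, 1])


def toy_benchmark(seed=0, length=10, n_train=500, n_test=200):
    """Hypothetical synthetic landscape: additive per-site effects plus small noise."""
    rng = np.random.default_rng(seed)
    letters = np.array(list(AMINO_ACIDS))
    seqs = ["".join(row) for row in rng.choice(letters, size=(n_train + n_test, length))]
    X = one_hot(seqs)
    true_w = rng.normal(size=X.shape[1])
    y = X @ true_w + rng.normal(scale=0.1, size=len(seqs))
    w, b = fit_ridge(X[:n_train], y[:n_train], alpha=1.0)
    return spearman(predict(X[n_train:], w, b), y[n_train:])
```

On a purely additive toy landscape like this one, the linear model recovers the ranking almost perfectly. That is by construction and says nothing about how such a model performs on real data. Real landscapes contain epistasis, which a one-hot linear model cannot capture. This is why it is informative when a baseline this simple turns out to be competitive.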
