Is calibration a fairness requirement? An argument from the point of view of moral philosophy and decision theory

Paper and Code

May 23, 2022

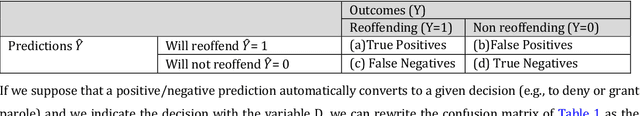

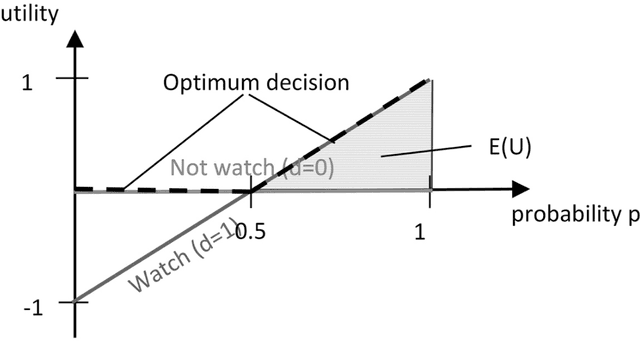

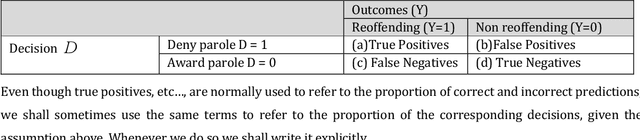

In this paper, we provide a moral analysis of two criteria of statistical fairness debated in the machine learning literature: 1) calibration between groups and 2) equality of false positive and false negative rates between groups. In our paper, we focus on moral arguments in support of either measure. The conflict between group calibration vs. false positive and false negative rate equality is one of the core issues in the debate about group fairness definitions among practitioners. For any thorough moral analysis, the meaning of the term fairness has to be made explicit and defined properly. For our paper, we equate fairness with (non-)discrimination, which is a legitimate understanding in the discussion about group fairness. More specifically, we equate it with prima facie wrongful discrimination in the sense this is used in Prof. Lippert-Rasmussen's treatment of this definition. In this paper, we argue that a violation of group calibration may be unfair in some cases, but not unfair in others. This is in line with claims already advanced in the literature, that algorithmic fairness should be defined in a way that is sensitive to context. The most important practical implication is that arguments based on examples in which fairness requires between-group calibration, or equality in the false-positive/false-negative rates, do no generalize. For it may be that group calibration is a fairness requirement in one case, but not in another.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge