Interpreting the Latent Space of GANs via Correlation Analysis for Controllable Concept Manipulation

Paper and Code

May 23, 2020

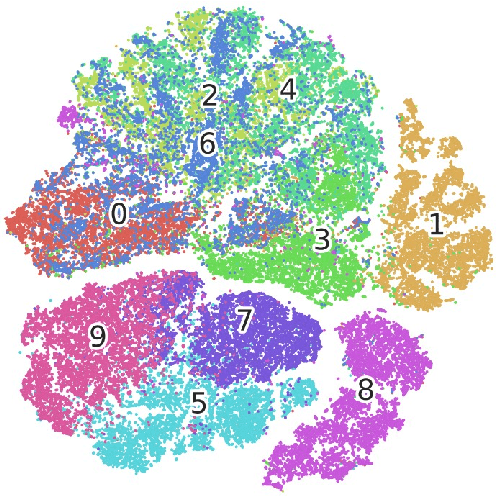

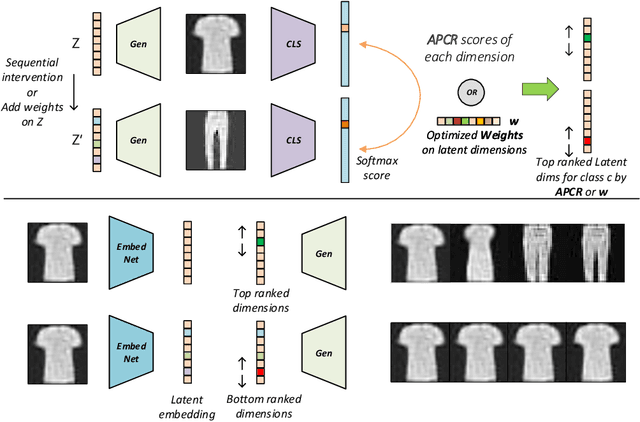

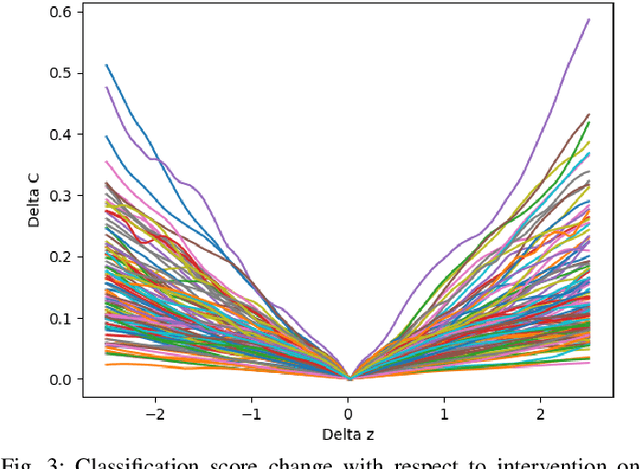

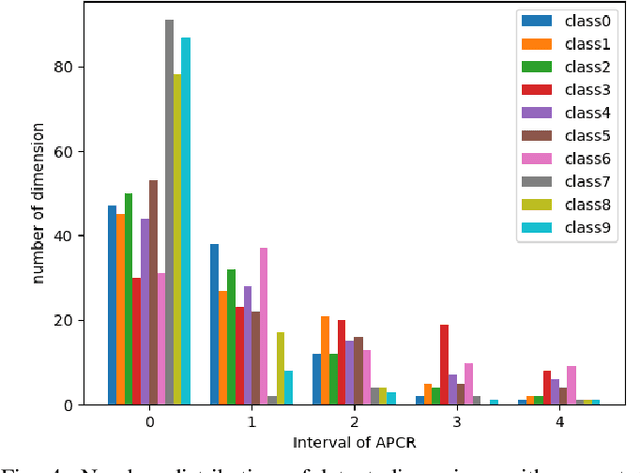

Generative adversarial nets (GANs) have been successfully applied in many fields like image generation, inpainting, super-resolution and drug discovery, etc., by now, the inner process of GANs is far from been understood. To get deeper insight of the intrinsic mechanism of GANs, in this paper, a method for interpreting the latent space of GANs by analyzing the correlation between latent variables and the corresponding semantic contents in generated images is proposed. Unlike previous methods that focus on dissecting models via feature visualization, the emphasis of this work is put on the variables in latent space, i.e. how the latent variables affect the quantitative analysis of generated results. Given a pretrained GAN model with weights fixed, the latent variables are intervened to analyze their effect on the semantic content in generated images. A set of controlling latent variables can be derived for specific content generation, and the controllable semantic content manipulation be achieved. The proposed method is testified on the datasets Fashion-MNIST and UT Zappos50K, experiment results show its effectiveness.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge