Interpreting Arabic Transformer Models

Paper and Code

Jan 19, 2022

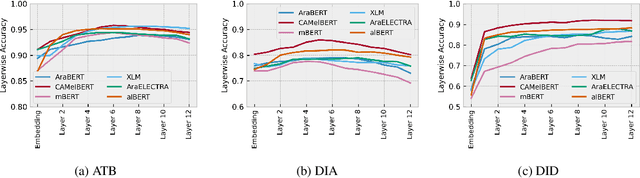

Arabic is a Semitic language which is widely spoken with many dialects. Given the success of pre-trained language models, many transformer models trained on Arabic and its dialects have surfaced. While these models have been compared with respect to downstream NLP tasks, no evaluation has been carried out to directly compare the internal representations. We probe how linguistic information is encoded in Arabic pretrained models, trained on different varieties of Arabic language. We perform a layer and neuron analysis on the models using three intrinsic tasks: two morphological tagging tasks based on MSA (modern standard Arabic) and dialectal POS-tagging and a dialectal identification task. Our analysis enlightens interesting findings such as: i) word morphology is learned at the lower and middle layers ii) dialectal identification necessitate more knowledge and hence preserved even in the final layers, iii) despite a large overlap in their vocabulary, the MSA-based models fail to capture the nuances of Arabic dialects, iv) we found that neurons in embedding layers are polysemous in nature, while the neurons in middle layers are exclusive to specific properties.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge