Instance-based Transfer Learning for Multilingual Deep Retrieval

Paper and Code

Nov 08, 2019

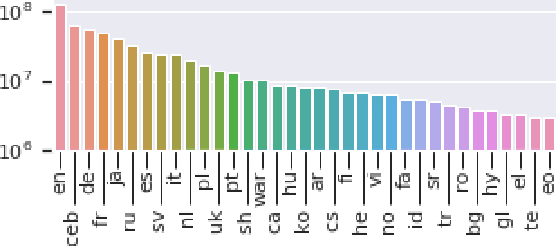

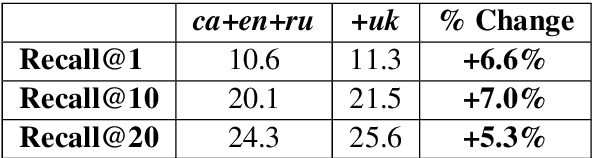

Perhaps the simplest type of multilingual transfer learning is instance-based transfer learning, in which data from the target language and the auxiliary languages are pooled, and a single model is learned from the pooled data. It is not immediately obvious when instance-based transfer learning will improve performance in this multilingual setting: for instance, a plausible conjecture is this kind of transfer learning would help only if the auxiliary languages were very similar to the target. Here we show that at large scale, this method is surprisingly effective, leading to positive transfer on all of 35 target languages we tested. We analyze this improvement and argue that the most natural explanation, namely direct vocabulary overlap between languages, only partially explains the performance gains: in fact, we demonstrate target-language improvement can occur after adding data from an auxiliary language with no vocabulary in common with the target. This surprising result is due to the effect of transitive vocabulary overlaps between pairs of auxiliary and target languages.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge