Inferring Convolutional Neural Networks' accuracies from their architectural characterizations

Paper and Code

Jan 10, 2020

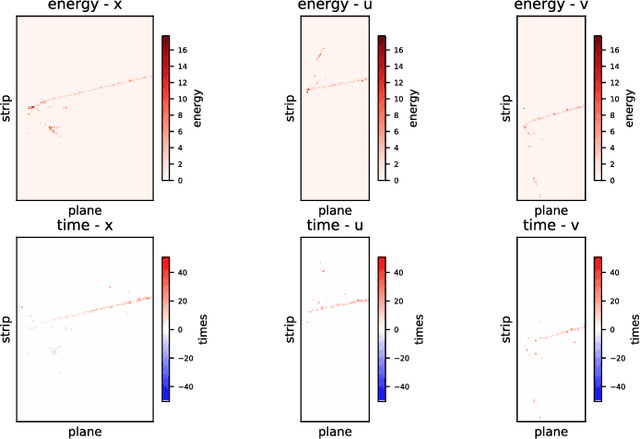

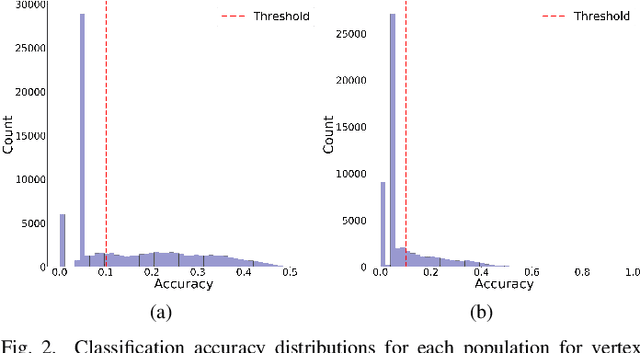

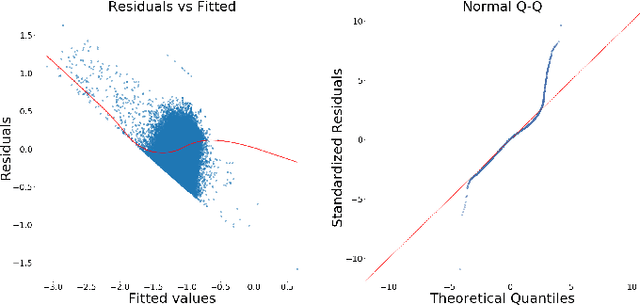

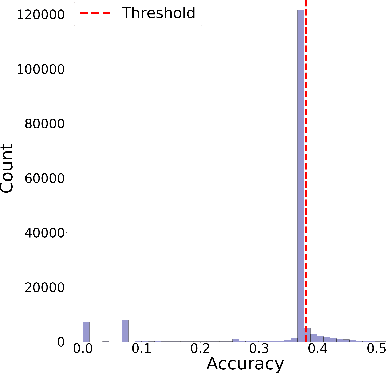

Convolutional Neural Networks (CNNs) have shown strong promise for analyzing scientific data from many domains including particle imaging detectors. However, the challenge of choosing the appropriate network architecture (depth, kernel shapes, activation functions, etc.) for specific applications and different data sets is still poorly understood. In this paper, we study the relationships between a CNN's architecture and its performance by proposing a systematic language that is useful for comparison between different CNN's architectures before training time. We characterize CNN's architecture by different attributes, and demonstrate that the attributes can be predictive of the networks' performance in two specific computer vision-based physics problems -- event vertex finding and hadron multiplicity classification in the MINERvA experiment at Fermi National Accelerator Laboratory. In doing so, we extract several architectural attributes from optimized networks' architecture for the physics problems, which are outputs of a model selection algorithm called Multi-node Evolutionary Neural Networks for Deep Learning (MENNDL). We use machine learning models to predict whether a network can perform better than a certain threshold accuracy before training. The models perform 16-20% better than random guessing. Additionally, we found an coefficient of determination of 0.966 for an Ordinary Least Squares model in a regression on accuracy over a large population of networks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge