Incremental Learning of Discrete Planning Domains from Continuous Perceptions


Mar 14, 2019

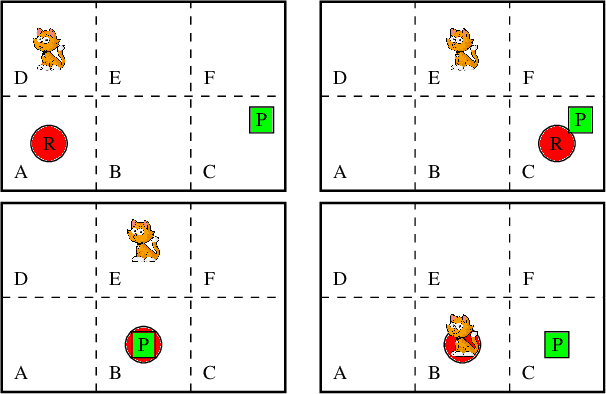

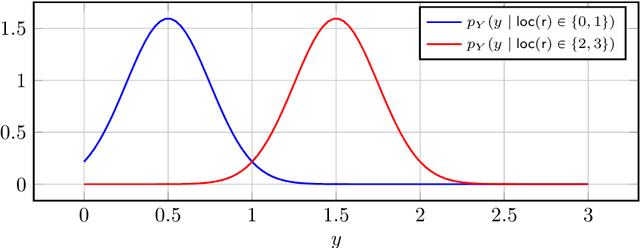

We propose a framework for learning discrete deterministic planning domains. In this framework, an agent learns the domain by observing the effects of its actions through continuous features that describe the state of the environment after each action is executed. In addition, the agent learns its perception function, i.e., a probabilistic mapping between state variables and sensor data represented as a vector of continuous random variables called perception variables. We define an algorithm that updates the planning domain and the perception function by (i) introducing new states, either by extending the possible values of state variables or by weakening their constraints; (ii) adapting the perception function to fit the observed data; and (iii) adapting the transition function on the basis of the executed actions and the effects observed via the perception function. The framework can also deal with exogenous events that happen in the environment.
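The three update steps can be illustrated with a minimal Python sketch. It is not the paper's algorithm: the abstract does not specify the model, so everything here is an assumption made for illustration. States are plain integers rather than assignments to state variables, the perception function is approximated by one running mean per state (equivalent to an isotropic Gaussian with a fixed variance, so the most likely state is the nearest mean), a hypothetical `novelty_threshold` decides when a new state is introduced, and the transition table simply keeps the most recently observed effect. Exogenous events are not modeled.

```python
import math


class IncrementalDomainLearner:
    """Toy learner: illustrative only, all names and rules are assumptions."""

    def __init__(self, novelty_threshold=1.0):
        # Hypothetical hyperparameter: maximum distance at which a
        # perception is still attributed to an already known state.
        self.novelty_threshold = novelty_threshold
        self.means = []        # means[s]: running mean perception of state s
        self.counts = []       # counts[s]: perceptions attributed to state s
        self.transitions = {}  # (state, action) -> next state (deterministic)

    def perceive(self, x):
        """Map a perception vector to a state, creating one if none fits."""
        best, best_dist = None, math.inf
        for s, mean in enumerate(self.means):
            d = math.dist(mean, x)
            if d < best_dist:
                best, best_dist = s, d
        if best is None or best_dist > self.novelty_threshold:
            # Step (i): introduce a new state for an unexplained perception.
            self.means.append(list(x))
            self.counts.append(1)
            return len(self.means) - 1
        # Step (ii): adapt the perception function (incremental mean update).
        self.counts[best] += 1
        n = self.counts[best]
        self.means[best] = [m + (xi - m) / n
                            for m, xi in zip(self.means[best], x)]
        return best

    def observe(self, prev_state, action, x):
        """Process the perception x received after executing action."""
        next_state = self.perceive(x)
        # Step (iii): adapt the transition function. Overwriting with the
        # latest observed effect is an assumed rule, not the paper's.
        self.transitions[(prev_state, action)] = next_state
        return next_state


# Usage: two well-separated perception clusters yield two states.
learner = IncrementalDomainLearner(novelty_threshold=1.0)
s0 = learner.perceive((0.0, 0.0))
s1 = learner.observe(s0, "move", (5.0, 5.1))
s2 = learner.observe(s1, "move_back", (0.1, -0.1))
print(learner.transitions)
```

In this sketch the third perception falls within the threshold of the first state's mean, so it is attributed to that state and shifts the mean slightly instead of creating a third state.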
