Incentive Mechanism for Privacy-Preserving Federated Learning


Jun 08, 2021

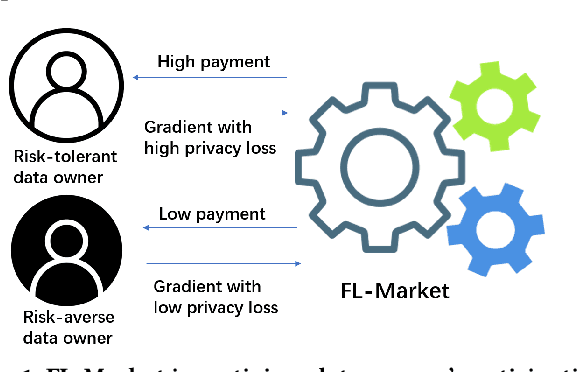

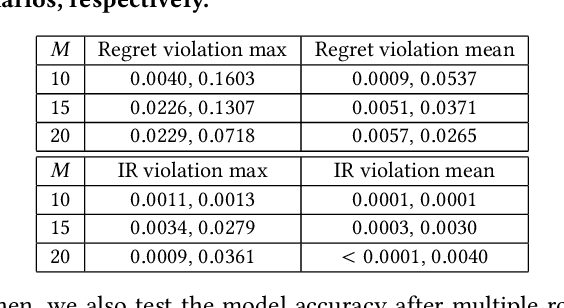

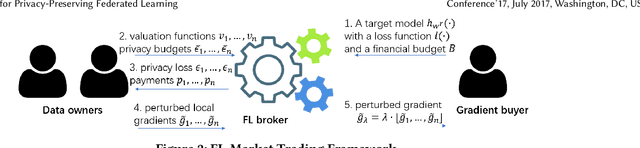

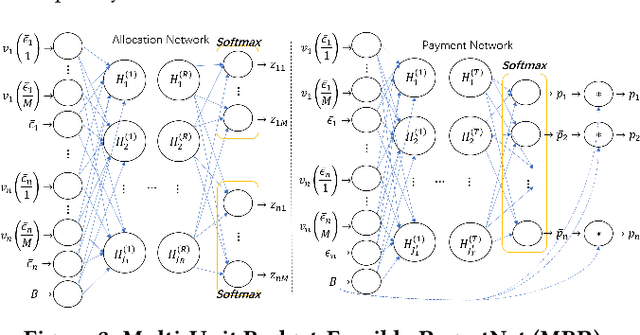

Federated learning (FL) is an emerging paradigm for machine learning in which data owners collaboratively train a model by sharing gradients instead of their raw data. Two fundamental research problems in FL are incentive mechanism design and privacy protection. The former concerns how to incentivize data owners to participate in FL. The latter concerns how to protect data owners' privacy while maintaining high utility of the trained models. However, the two problems have been studied separately, and no existing work solves both at the same time. In this work, we address them simultaneously with FL-Market, which incentivizes data owners' participation by providing both appropriate payments and privacy protection. FL-Market enables data owners to obtain compensation according to their privacy loss, quantified by local differential privacy (LDP). Our insight is that, by meeting data owners' personalized privacy preferences and providing appropriate payments, we can (1) incentivize privacy risk-tolerant data owners to set larger privacy parameters (i.e., to provide gradients with less noise) and (2) provide the preferred level of privacy protection for privacy risk-averse data owners. To achieve this, we design a personalized LDP-based FL framework with a deep learning-empowered auction mechanism that incentivizes the trading of gradients with less noise, together with optimal aggregation mechanisms for model updates. Our experiments verify the effectiveness of the proposed framework and mechanisms.
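To make the link between a larger privacy parameter and a less noisy gradient concrete, the following is a minimal sketch, not the paper's implementation. It assumes a Laplace mechanism applied to coordinate-wise clipped gradients and an inverse-variance weighted average as the aggregation rule; the abstract does not specify either choice, and the function names (`perturb_gradient`, `aggregation_weights`, `aggregate`) and the clipping bound are hypothetical. The auction and payment components are not modeled here.

```python
import random


def clip(grad, bound):
    """Clip each coordinate of the gradient to [-bound, bound]."""
    return [max(-bound, min(bound, g)) for g in grad]


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two i.i.d.
    exponential variables, each with mean `scale`."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def perturb_gradient(grad, epsilon, bound, rng):
    """Assumed LDP perturbation: clip, then add Laplace noise.

    Any two clipped gradients differ by at most 2 * bound * d in L1 norm,
    so a noise scale of (2 * bound * d) / epsilon gives epsilon-LDP.
    A larger epsilon therefore means a smaller noise scale.
    """
    clipped = clip(grad, bound)
    scale = 2.0 * bound * len(clipped) / epsilon
    return [g + laplace_noise(scale, rng) for g in clipped]


def aggregation_weights(epsilons):
    """Assumed aggregation rule: inverse-variance weighting.

    The Laplace noise variance is proportional to 1 / epsilon^2, so
    weights proportional to epsilon^2 minimize the noise variance of
    the weighted average among weights that sum to 1.
    """
    squared = [e * e for e in epsilons]
    total = sum(squared)
    return [s / total for s in squared]


def aggregate(noisy_grads, epsilons):
    """Weighted average of the owners' perturbed gradients."""
    weights = aggregation_weights(epsilons)
    dim = len(noisy_grads[0])
    return [
        sum(w * g[j] for w, g in zip(weights, noisy_grads))
        for j in range(dim)
    ]
```

Under these assumptions, a risk-tolerant owner who reports `epsilon = 3.0` receives nine times the aggregation weight of a risk-averse owner who reports `epsilon = 1.0`, which illustrates why a market would be willing to pay more for gradients with less noise.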
