In-situ Stochastic Training of MTJ Crossbar based Neural Networks

Paper and Code

Jun 24, 2018

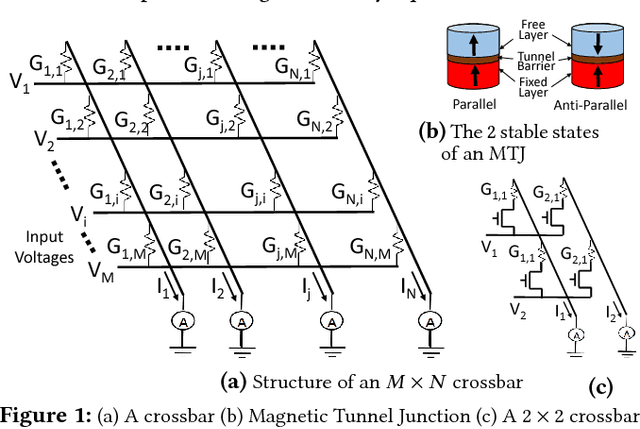

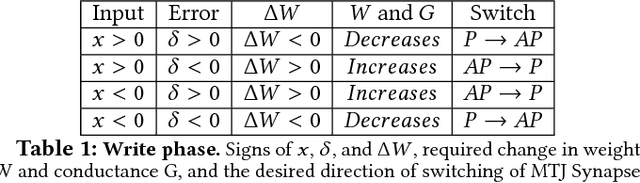

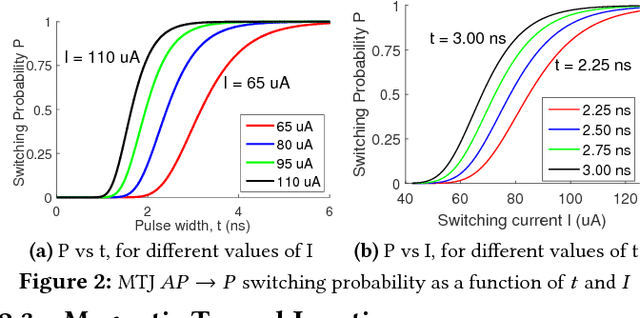

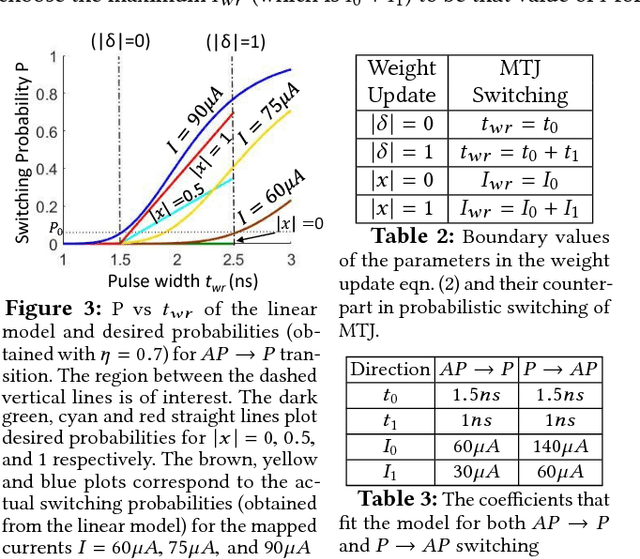

Owing to high device density, scalability and non-volatility, Magnetic Tunnel Junction-based crossbars have garnered significant interest for implementing the weights of an artificial neural network. The existence of only two stable states in MTJs implies a high overhead of obtaining optimal binary weights in software. We illustrate that the inherent parallelism in the crossbar structure makes it highly appropriate for in-situ training, wherein the network is taught directly on the hardware. It leads to significantly smaller training overhead as the training time is independent of the size of the network, while also circumventing the effects of alternate current paths in the crossbar and accounting for manufacturing variations in the device. We show how the stochastic switching characteristics of MTJs can be leveraged to perform probabilistic weight updates using the gradient descent algorithm. We describe how the update operations can be performed on crossbars both with and without access transistors and perform simulations on them to demonstrate the effectiveness of our techniques. The results reveal that stochastically trained MTJ-crossbar NNs achieve a classification accuracy nearly same as that of real-valued-weight networks trained in software and exhibit immunity to device variations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge