Improving Novelty Detection using the Reconstructions of Nearest Neighbours

Paper and Code

Nov 11, 2021

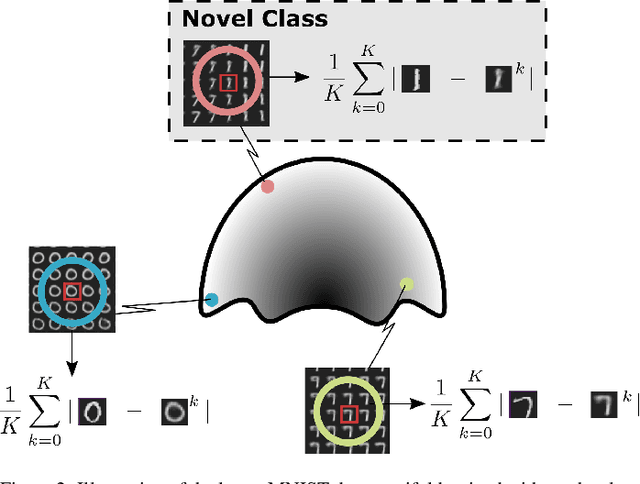

We show that using nearest neighbours in the latent space of autoencoders (AE) significantly improves performance of semi-supervised novelty detection in both single and multi-class contexts. Autoencoding methods detect novelty by learning to differentiate between the non-novel training class(es) and all other unseen classes. Our method harnesses a combination of the reconstructions of the nearest neighbours and the latent-neighbour distances of a given input's latent representation. We demonstrate that our nearest-latent-neighbours (NLN) algorithm is memory and time efficient, does not require significant data augmentation, nor is reliant on pre-trained networks. Furthermore, we show that the NLN-algorithm is easily applicable to multiple datasets without modification. Additionally, the proposed algorithm is agnostic to autoencoder architecture and reconstruction error method. We validate our method across several standard datasets for a variety of different autoencoding architectures such as vanilla, adversarial and variational autoencoders using either reconstruction, residual or feature consistent losses. The results show that the NLN algorithm grants up to a 17% increase in Area Under the Receiver Operating Characteristics (AUROC) curve performance for the multi-class case and 8% for single-class novelty detection.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge