Improved Differentially Private Decentralized Source Separation for fMRI Data


Oct 28, 2019

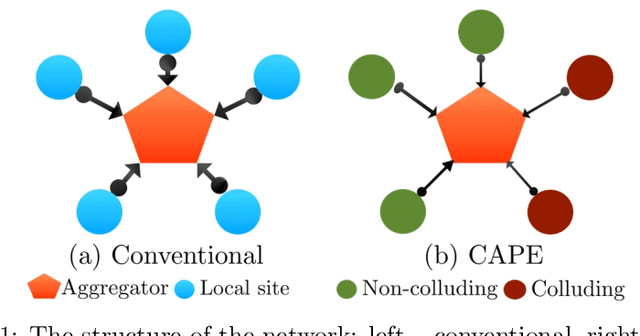

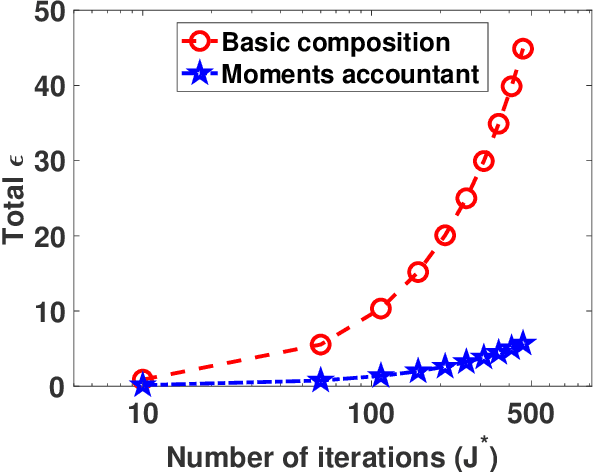

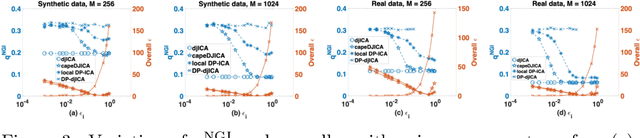

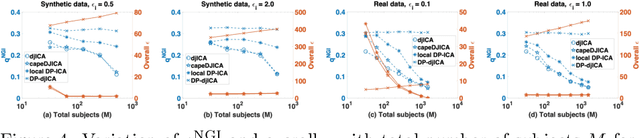

Blind source separation algorithms such as independent component analysis (ICA) are widely used in the analysis of neuroimaging data. To leverage larger sample sizes, different data holders (sites) may wish to learn feature representations collaboratively. However, such datasets are often privacy-sensitive, which precludes centralized analyses that pool the data at a single site. A recently proposed algorithm uses message passing between sites and a central aggregator to perform decentralized joint ICA (djICA) without sharing the data, but it does not satisfy formal privacy guarantees. We propose a differentially private algorithm for performing ICA in a decentralized data setting. Differential privacy provides a formal, mathematically rigorous privacy guarantee by introducing noise into the messages. Conventional approaches to decentralized differentially private algorithms may require too much noise because the sample size at each site is typically small. We leverage a recently proposed correlated noise protocol to remedy this excessive noise problem. We evaluate the proposed algorithm on synthetic and real fMRI datasets and show that it outperforms existing approaches and can sometimes reach the same level of utility as the corresponding non-private algorithm. This indicates that meaningful utility is possible while preserving privacy.
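The excessive noise problem and the idea behind a correlated noise remedy can be illustrated with a small simulation. The sketch below is not the paper's algorithm. It assumes a simplified setting in which each of S sites sends one noisy scalar to an aggregator that averages the messages. The variance split used here (a zero-sum noise term shared across sites, plus an independent term with variance tau^2 / S) is an assumption made for illustration, and the function names, site values, and noise level are hypothetical. In a real deployment the zero-sum terms would have to be generated through a secure protocol rather than by one party, as done here for brevity.

```python
import random
import statistics


def conventional_release(site_values, tau, rng):
    """Each site adds independent Gaussian noise with std tau to its local value."""
    return [v + rng.gauss(0.0, tau) for v in site_values]


def correlated_release(site_values, tau, rng):
    """Each site adds a zero-sum noise term plus a smaller independent term."""
    num_sites = len(site_values)
    # Zero-sum noise: draw i.i.d. Gaussians and subtract their mean so they sum to zero.
    # (Assumption: generated centrally here; a real protocol would do this securely.)
    raw = [rng.gauss(0.0, tau) for _ in range(num_sites)]
    raw_mean = sum(raw) / num_sites
    zero_sum = [r - raw_mean for r in raw]
    # Independent local noise with variance tau^2 / S (assumed split).
    local_std = tau / num_sites ** 0.5
    return [v + e + rng.gauss(0.0, local_std) for v, e in zip(site_values, zero_sum)]


def aggregate(messages):
    """The aggregator simply averages the messages it receives."""
    return sum(messages) / len(messages)


def empirical_noise_std(release, site_values, tau, trials=20000, seed=0):
    """Estimate the std of the error in the aggregate over repeated noisy releases."""
    rng = random.Random(seed)
    true_mean = aggregate(site_values)
    errors = [aggregate(release(site_values, tau, rng)) - true_mean for _ in range(trials)]
    return statistics.pstdev(errors)


site_values = [0.2, 0.5, 0.1, 0.9]  # hypothetical local statistics, S = 4
tau = 1.0                           # hypothetical per-site noise std
std_conventional = empirical_noise_std(conventional_release, site_values, tau)
std_correlated = empirical_noise_std(correlated_release, site_values, tau)
print(f"conventional: {std_conventional:.3f}, correlated: {std_correlated:.3f}")
```

Under these assumptions the error in the conventional aggregate has standard deviation close to tau / sqrt(S), while the correlated scheme comes close to tau / S, because the zero-sum terms cancel in the average and only the smaller independent terms remain. The simulation shows only the utility side of the trade-off and says nothing about the privacy guarantee of either scheme.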
