Imposing Gaussian Pre-Activations in a Neural Network

Paper and Code

May 24, 2022

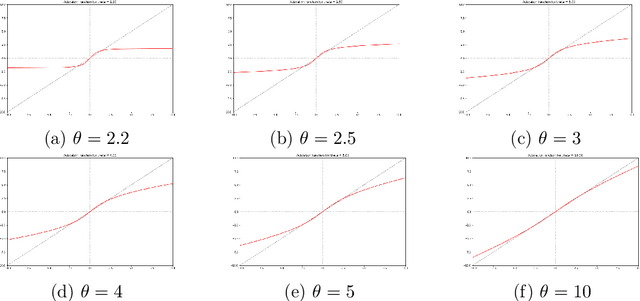

The goal of the present work is to propose a way to modify both the initialization distribution of the weights of a neural network and its activation function, such that all pre-activations are Gaussian. We propose a family of pairs initialization/activation, where the activation functions span a continuum from bounded functions (such as Heaviside or tanh) to the identity function. This work is motivated by the contradiction between existing works dealing with Gaussian pre-activations: on one side, the works in the line of the Neural Tangent Kernels and the Edge of Chaos are assuming it, while on the other side, theoretical and experimental results challenge this hypothesis. The family of pairs initialization/activation we are proposing will help us to answer this hot question: is it desirable to have Gaussian pre-activations in a neural network?

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge