Image-level Regression for Uncertainty-aware Retinal Image Segmentation

Paper and Code

May 27, 2024

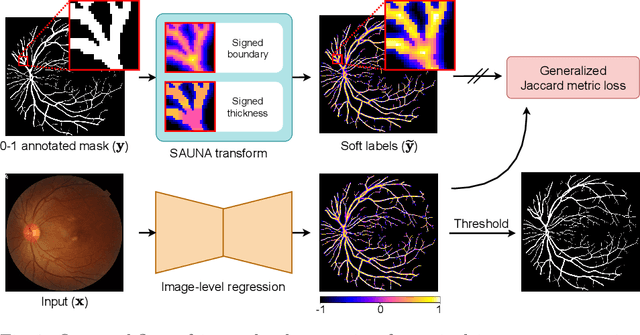

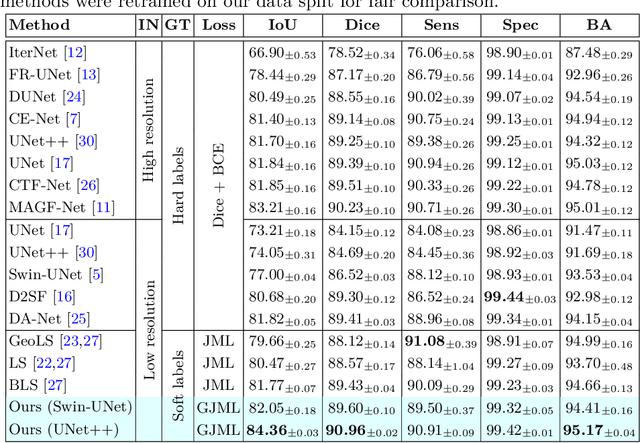

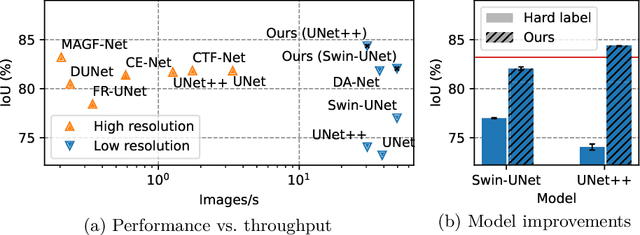

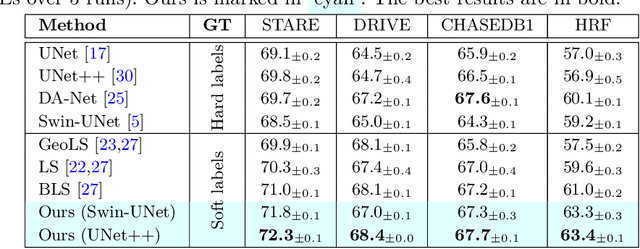

Accurate retinal vessel segmentation is a crucial step in the quantitative assessment of retinal vasculature, which is needed for the early detection of retinal diseases and other conditions. Numerous studies have been conducted to tackle the problem of segmenting vessels automatically using a pixel-wise classification approach. The common practice of creating ground truth labels is to categorize pixels as foreground and background. This approach is, however, biased, and it ignores the uncertainty of a human annotator when it comes to annotating e.g. thin vessels. In this work, we propose a simple and effective method that casts the retinal image segmentation task as an image-level regression. For this purpose, we first introduce a novel Segmentation Annotation Uncertainty-Aware (SAUNA) transform, which adds pixel uncertainty to the ground truth using the pixel's closeness to the annotation boundary and vessel thickness. To train our model with soft labels, we generalize the earlier proposed Jaccard metric loss to arbitrary hypercubes, which is a second contribution of this work. The proposed SAUNA transform and the new theoretical results allow us to directly train a standard U-Net-like architecture at the image level, outperforming all recently published methods. We conduct thorough experiments and compare our method to a diverse set of baselines across 5 retinal image datasets. Our implementation is available at \url{https://github.com/Oulu-IMEDS/SAUNA}.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge