ICANet: A Method of Short Video Emotion Recognition Driven by Multimodal Data

Aug 24, 2022

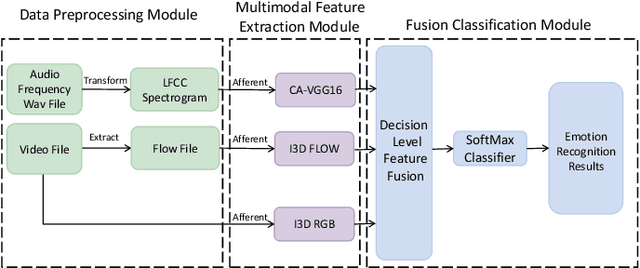

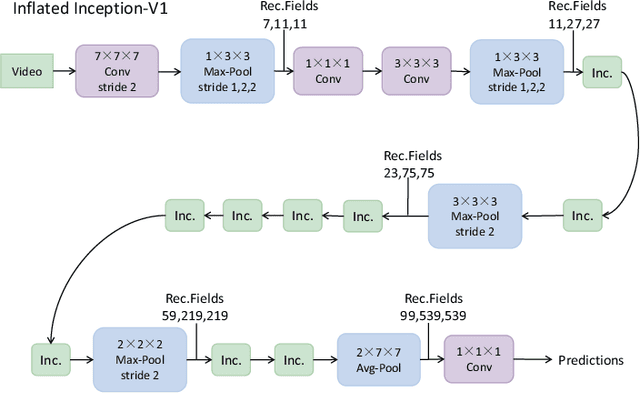

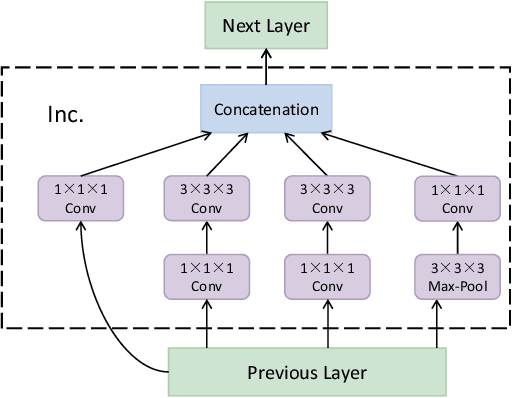

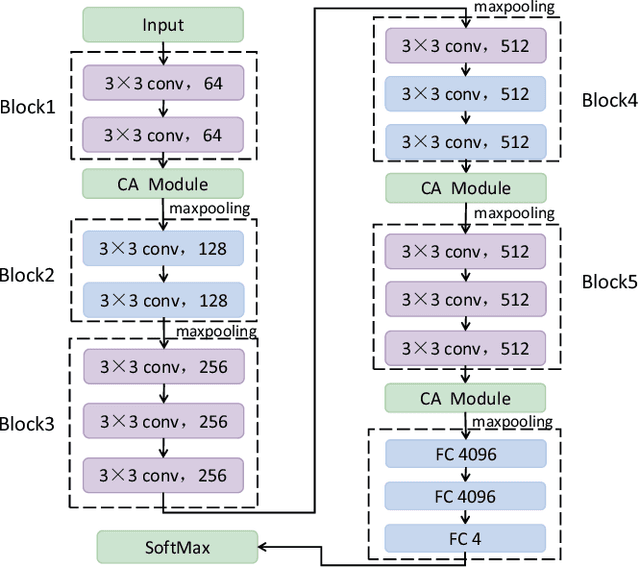

With the rapid development of artificial intelligence and the rise of short videos, emotion recognition in short videos has become one of the most important research topics in human-computer interaction. At present, most emotion recognition methods still rely on a single modality. In daily life, however, people often disguise their real emotions, so the accuracy of single-modality emotion recognition is relatively poor. Moreover, similar emotions are not easy to distinguish. We therefore propose a new approach, denoted ICANet, that performs multimodal short video emotion recognition using three modalities: audio, video, and optical flow. Combining them compensates for the limitations of any single modality and improves the accuracy of emotion recognition in short videos. ICANet achieves an accuracy of 80.77% on the IEMOCAP benchmark, exceeding the SOTA methods by 15.89%.
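
The abstract does not say how the audio, video, and optical flow streams are combined, so the following is only an illustrative sketch of one generic option, late fusion by weighted averaging of per-modality class probabilities. It is not ICANet's architecture. The label set, the `late_fusion` helper, and the example scores are all hypothetical and chosen only to show how one misleading modality can be outvoted by the other two.

```python
import math

# Hypothetical label set for illustration; not taken from the paper.
EMOTIONS = ["angry", "happy", "neutral", "sad"]


def softmax(scores):
    """Convert raw class scores into probabilities (numerically stable)."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def late_fusion(modality_scores, weights=None):
    """Weighted average of per-modality class probabilities.

    modality_scores: dict mapping a modality name to a list of raw class scores.
    weights: optional dict of per-modality weights that sum to 1;
             defaults to equal weighting (an assumption of this sketch).
    """
    names = list(modality_scores)
    if weights is None:
        weights = {name: 1.0 / len(names) for name in names}
    n_classes = len(modality_scores[names[0]])
    fused = [0.0] * n_classes
    for name in names:
        probs = softmax(modality_scores[name])
        for i, p in enumerate(probs):
            fused[i] += weights[name] * p
    return fused


# Made-up scores: the video stream alone suggests "happy" (for example, a
# disguised expression), while audio and optical flow both suggest "angry".
scores = {
    "audio": [2.0, 0.5, 0.1, 0.3],
    "video": [0.2, 1.5, 0.4, 0.1],
    "optical_flow": [1.8, 0.3, 0.2, 0.4],
}

fused = late_fusion(scores)
predicted = EMOTIONS[fused.index(max(fused))]
print(predicted)
```

With these made-up scores the video stream on its own would predict "happy", while the fused prediction follows the two agreeing modalities. Learned fusion, such as attention across modalities, is a common alternative, but the abstract gives no detail on which approach ICANet takes.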
