Hypothesis Testing Prompting Improves Deductive Reasoning in Large Language Models

Paper and Code

May 09, 2024

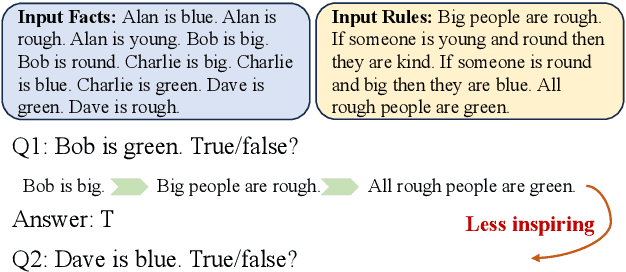

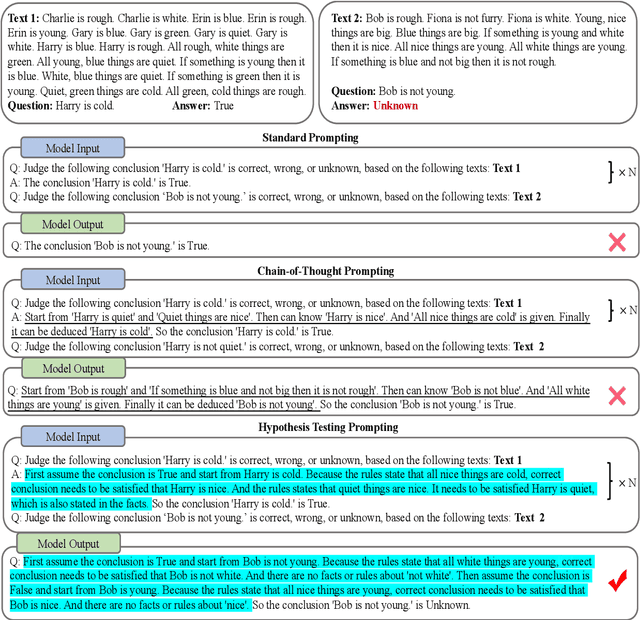

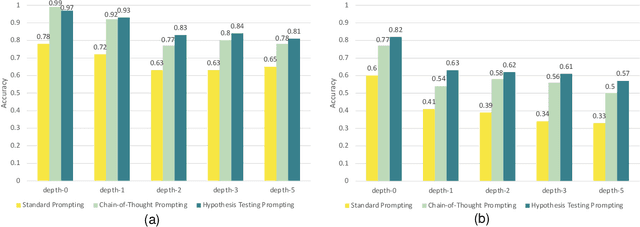

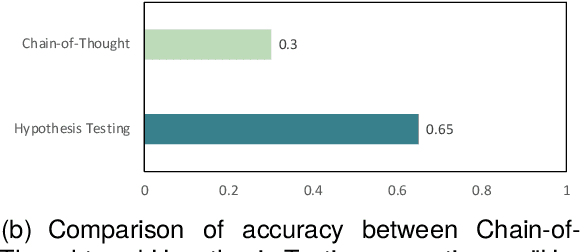

Combining different forms of prompts with pre-trained large language models has yielded remarkable results on reasoning tasks (e.g. Chain-of-Thought prompting). However, along with testing on more complex reasoning, these methods also expose problems such as invalid reasoning and fictional reasoning paths. In this paper, we develop \textit{Hypothesis Testing Prompting}, which adds conclusion assumptions, backward reasoning, and fact verification during intermediate reasoning steps. \textit{Hypothesis Testing prompting} involves multiple assumptions and reverses validation of conclusions leading to its unique correct answer. Experiments on two challenging deductive reasoning datasets ProofWriter and RuleTaker show that hypothesis testing prompting not only significantly improves the effect, but also generates a more reasonable and standardized reasoning process.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge