Hyperspherically Regularized Networks for BYOL Improves Feature Uniformity and Separability

Paper and Code

Apr 29, 2021

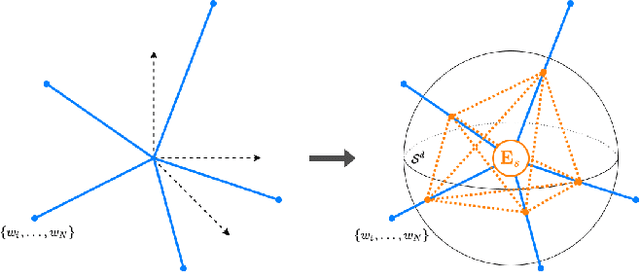

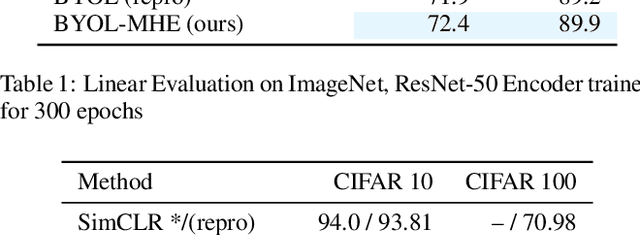

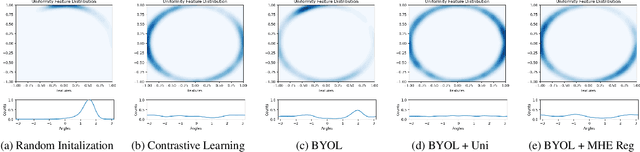

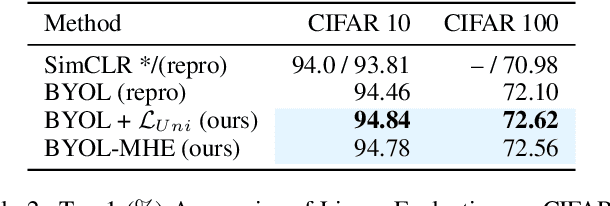

Bootstrap Your Own Latent (BYOL) introduced an approach to self-supervised learning that avoids the contrastive paradigm and thereby removes the computational burden of negative sampling. However, feature representations under this paradigm are poorly distributed on the surface of the unit-hypersphere representation space compared to those of contrastive methods. This work empirically demonstrates that the feature diversity enforced by contrastive losses is also beneficial when employed in BYOL, where it provides greater inter-class feature separability. Therefore, to achieve a more uniform distribution of features, we advocate the minimization of hyperspherical energy (i.e., maximization of entropy) in BYOL network weights. We show that either directly optimizing a measure of uniformity alongside the standard loss, or regularizing the networks of the BYOL architecture to minimize the hyperspherical energy of neurons, can produce more uniformly distributed and better-performing representations for downstream tasks.
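
To make the two quantities named in the abstract concrete, the following is a minimal sketch rather than the authors' implementation. It assumes the commonly used definitions from prior literature: a uniformity measure given by the log of the mean pairwise Gaussian potential on the unit hypersphere, and a hyperspherical energy given by the sum of inverse pairwise distances between normalized vectors. The function names and the parameters `t`, `s`, and `eps` are illustrative choices, not taken from the paper.

```python
import math
from itertools import combinations


def normalize(v):
    """Project a vector onto the unit hypersphere."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def sq_dist(a, b):
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def uniformity_loss(features, t=2.0):
    """Log of the mean pairwise Gaussian potential (assumed definition).

    Lower values indicate features spread more evenly over the sphere.
    """
    pts = [normalize(f) for f in features]
    pairs = list(combinations(pts, 2))
    mean_potential = sum(math.exp(-t * sq_dist(a, b)) for a, b in pairs) / len(pairs)
    return math.log(mean_potential)


def hyperspherical_energy(weights, s=1.0, eps=1e-8):
    """Sum of inverse pairwise distances between normalized vectors
    (assumed definition, with s > 0). Lower energy means the vectors,
    e.g. the neurons of a layer, are more evenly spread.
    """
    pts = [normalize(w) for w in weights]
    return sum(
        (math.sqrt(sq_dist(a, b)) + eps) ** (-s) for a, b in combinations(pts, 2)
    )


# Hypothetical toy data: four evenly spaced points versus four clustered points.
spread = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
clustered = [[1.0, 0.0], [1.0, 0.1], [1.0, -0.1], [1.0, 0.05]]

print(uniformity_loss(spread), uniformity_loss(clustered))
print(hyperspherical_energy(spread), hyperspherical_energy(clustered))
```

On this toy data both quantities are smaller for the evenly spaced points than for the clustered ones. In a training setup, the first term would be added to the standard BYOL loss on the feature batch, while the second would be applied as a regularizer on the weight vectors of each layer; how the paper weights and combines these terms is not specified in the abstract.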
