HyObscure: Hybrid Obscuring for Privacy-Preserving Data Publishing


Dec 15, 2021

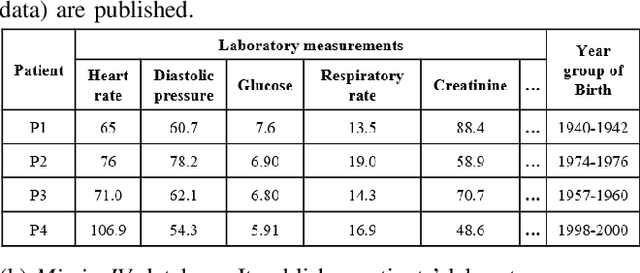

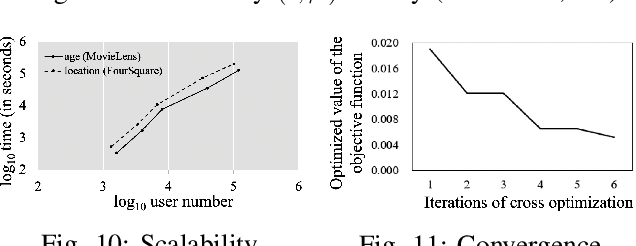

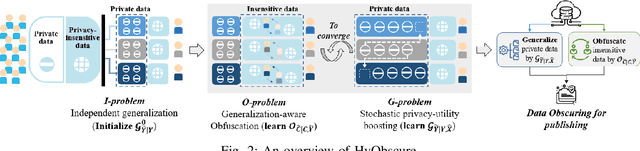

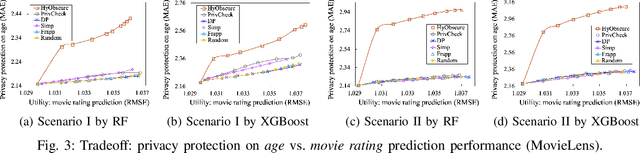

Minimizing privacy leakage while ensuring data utility is a critical problem for data holders in a privacy-preserving data publishing task. Most prior research considers only one type of data and resorts to a single obscuring method, e.g., obfuscation or generalization, to achieve a privacy-utility tradeoff. This is inadequate for protecting real-life heterogeneous data and struggles to defend against ever-growing machine-learning-based inference attacks. This work presents a pilot study on privacy-preserving data publishing in which both generalization and obfuscation operations are employed for heterogeneous data protection. To this end, we first propose novel measures for privacy and utility quantification and formulate the hybrid privacy-preserving data obscuring problem to account for the joint effect of generalization and obfuscation. We then design a novel hybrid protection mechanism called HyObscure, which cross-iteratively optimizes the generalization and obfuscation operations for maximum privacy protection under a given utility guarantee. The convergence of the iterative process and the privacy leakage bound of HyObscure are also established theoretically. Extensive experiments demonstrate that HyObscure significantly outperforms a variety of state-of-the-art baseline methods when facing various inference attacks under different scenarios. HyObscure also scales linearly with the data size and behaves robustly under varying key parameters.
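To make the two obscuring operations named in the abstract concrete, here is a minimal Python sketch. It is not the HyObscure mechanism and does no optimization; it only shows what generalization (coarsening a value into a range) and obfuscation (probabilistically replacing a value) can look like on a toy record. All names (`generalize_age`, `obfuscate`, `keep_prob`, the `age` and `item` fields) are hypothetical and chosen for illustration only.

```python
import random


def generalize_age(age, width=10):
    """Generalization: replace an exact age with a coarser inclusive range."""
    low = (age // width) * width
    return (low, low + width - 1)


def obfuscate(value, domain, keep_prob, rng):
    """Obfuscation: keep the value with probability keep_prob,
    otherwise replace it with a value drawn uniformly from domain."""
    if rng.random() < keep_prob:
        return value
    return rng.choice(domain)


def obscure_records(records, item_domain, keep_prob, seed=0):
    """Apply both operations to each record (illustrative only)."""
    rng = random.Random(seed)
    return [
        {
            "age": generalize_age(r["age"]),
            "item": obfuscate(r["item"], item_domain, keep_prob, rng),
        }
        for r in records
    ]


# Toy data, invented for this example.
records = [{"age": 34, "item": "A"}, {"age": 58, "item": "C"}]
published = obscure_records(records, ["A", "B", "C"], keep_prob=0.7)
```

In this sketch the range width and `keep_prob` are fixed by hand. The abstract describes HyObscure as optimizing the two operations jointly under a utility guarantee, which this example does not attempt.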
