Hybrid PS-V Technique: A Novel Sensor Fusion Approach for Fast Mobile Eye-Tracking with Sensor-Shift Aware Correction


Jul 19, 2017

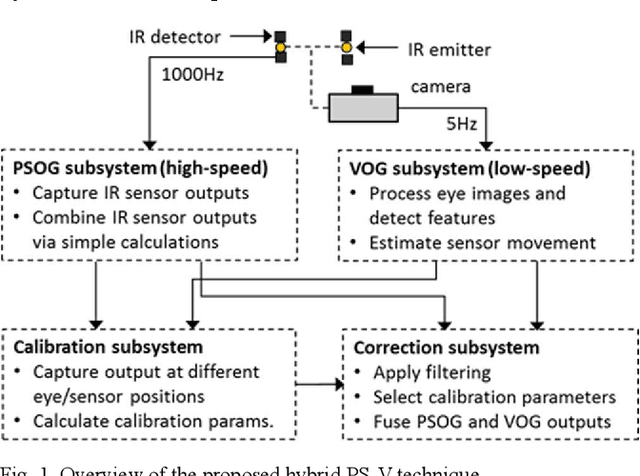

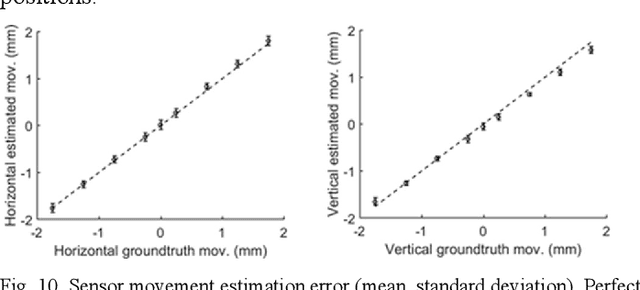

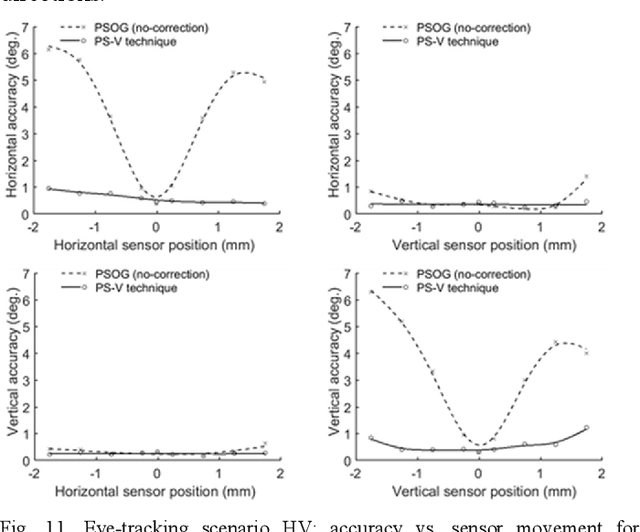

This paper introduces and evaluates a hybrid technique that efficiently fuses the eye-tracking principles of photosensor oculography (PSOG) and video oculography (VOG). The main concept of this approach is to use a few fast, low-power photosensors as the core mechanism for high-speed eye-tracking while, in parallel, a video sensor operating at a low sampling rate (snapshot mode) performs dead-reckoning error correction when sensor movements occur. To evaluate the proposed method, we simulate the functional components of the technique and present results for experimental scenarios involving various combinations of horizontal and vertical eye and sensor movements. Our evaluation shows that the technique provides robustness to sensor shifts that could otherwise induce errors larger than 5 deg. Our analysis suggests that the technique can potentially enable high-speed eye-tracking at low power, making it suitable for emerging head-mounted devices, e.g. AR/VR headsets.
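The fusion idea described above can be illustrated with a minimal one-dimensional sketch. It is not the paper's simulation: it assumes, purely for illustration, that a sensor shift appears as a constant additive bias on the photosensor estimate, that the video snapshot is noise-free and unaffected by the shift, and that the correction is simply held until the next snapshot. The function `simulate`, its parameters, and the chosen rates (1000 Hz photosensor, 5 Hz video) are all hypothetical.

```python
import math

def simulate(n_samples=2000, psog_hz=1000, vog_hz=5,
             shift_deg=5.0, shift_at_sample=1050):
    """Return (raw_err, fused_err): per-sample absolute gaze error in degrees.

    Assumptions (illustrative only, not taken from the paper):
    - a sensor shift adds a constant bias to the photosensor (PSOG) estimate;
    - each video (VOG) snapshot yields the true gaze, unaffected by the shift;
    - the latest correction is held until the next snapshot arrives.
    """
    step = psog_hz // vog_hz          # PSOG samples between video snapshots
    correction = 0.0                  # offset currently applied to PSOG output
    raw_err, fused_err = [], []
    for i in range(n_samples):
        t = i / psog_hz
        gaze = 10.0 * math.sin(2.0 * math.pi * 0.5 * t)  # true gaze (deg)
        bias = shift_deg if i >= shift_at_sample else 0.0
        psog = gaze + bias            # fast estimate, corrupted after the shift
        if i % step == 0:             # low-rate video snapshot is available
            vog = gaze                # assumed shift-invariant reference
            correction = vog - psog   # re-anchor the fast estimate
        fused = psog + correction
        raw_err.append(abs(psog - gaze))
        fused_err.append(abs(fused - gaze))
    return raw_err, fused_err

if __name__ == "__main__":
    raw, fused = simulate()
    print("max uncorrected error after shift:", max(raw[1050:]))
    print("max fused error after next snapshot:", max(fused[1200:]))
```

Under these assumptions the uncorrected error stays at the shift magnitude for the rest of the recording, whereas the fused estimate is wrong only until the next video snapshot. The snapshot rate therefore bounds how long a shift can go uncorrected, which is the trade-off between power and robustness that the abstract points to.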
