HiFiHR: Enhancing 3D Hand Reconstruction from a Single Image via High-Fidelity Texture

Aug 25, 2023

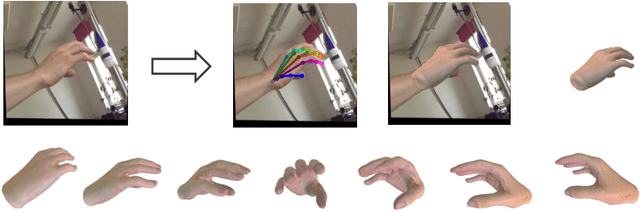

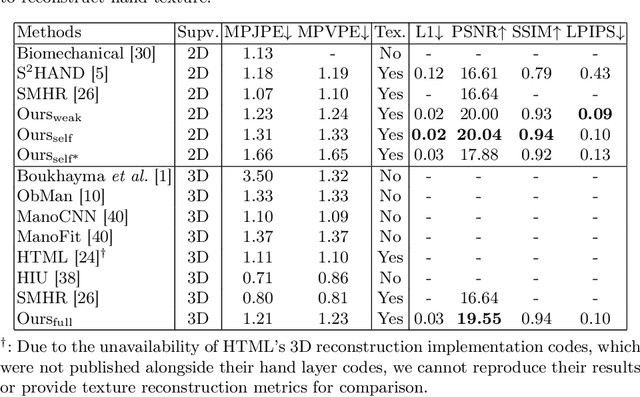

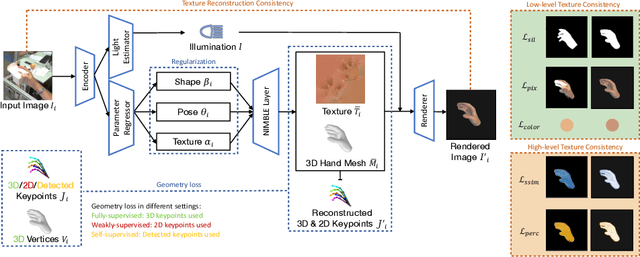

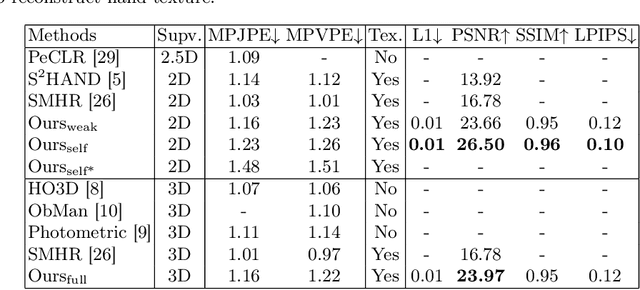

We present HiFiHR, a high-fidelity hand reconstruction approach that applies render-and-compare within a learning-based framework to reconstruct a hand from a single image. It generates visually plausible and accurate 3D hand meshes while recovering realistic textures. Our method achieves superior texture reconstruction by employing a parametric hand model with predefined texture assets, and by enforcing texture reconstruction consistency between the rendered and input images during training. Moreover, after pretraining the network on an annotated dataset, we apply our pipeline under varying degrees of supervision, i.e., self-supervision, weak supervision, and full supervision, and discuss how much the learned high-fidelity textures contribute to hand pose and shape estimation at each level. Experimental results on public benchmarks, including FreiHAND and HO-3D, demonstrate that our method outperforms state-of-the-art hand reconstruction methods in texture reconstruction quality while maintaining comparable accuracy in pose and shape estimation. Our code is available at https://github.com/viridityzhu/HiFiHR.
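To make the render-and-compare idea concrete, the following is a minimal sketch of one common form of consistency term: a masked per-pixel L1 difference between a rendered image and the input image. It is an illustration under stated assumptions, not the loss used in HiFiHR. The function name `masked_photometric_l1`, the nested-list image layout, and the use of a binary hand mask are all hypothetical choices; the actual training objective is defined in the paper and the linked repository.

```python
def masked_photometric_l1(rendered, target, mask):
    """Mean absolute color difference over pixels where mask is nonzero.

    Hypothetical helper for illustration only (not the HiFiHR API).
    rendered, target: H x W x C nested lists of floats in [0, 1]
    mask: H x W nested list of 0/1 values marking hand pixels
    """
    total = 0.0
    count = 0
    for row_r, row_t, row_m in zip(rendered, target, mask):
        for px_r, px_t, m in zip(row_r, row_t, row_m):
            if m:
                # Accumulate the absolute difference of every color channel.
                total += sum(abs(a - b) for a, b in zip(px_r, px_t))
                count += len(px_r)
    # An empty mask contributes no error.
    return total / count if count else 0.0


# Toy 1 x 2 image: only the first pixel lies inside the (assumed) hand mask.
rendered = [[[0.5, 0.5, 0.5], [0.0, 0.0, 0.0]]]
target = [[[1.0, 0.5, 0.0], [1.0, 1.0, 1.0]]]
mask = [[1, 0]]
loss = masked_photometric_l1(rendered, target, mask)
```

In a real training loop, the rendered image would come from a differentiable renderer and the loss would be computed on tensors so that gradients can flow back to the texture, shape, and pose parameters; the pure-Python version here only shows the arithmetic.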
