Hate Speech Criteria: A Modular Approach to Task-Specific Hate Speech Definitions

Paper and Code

Jun 30, 2022

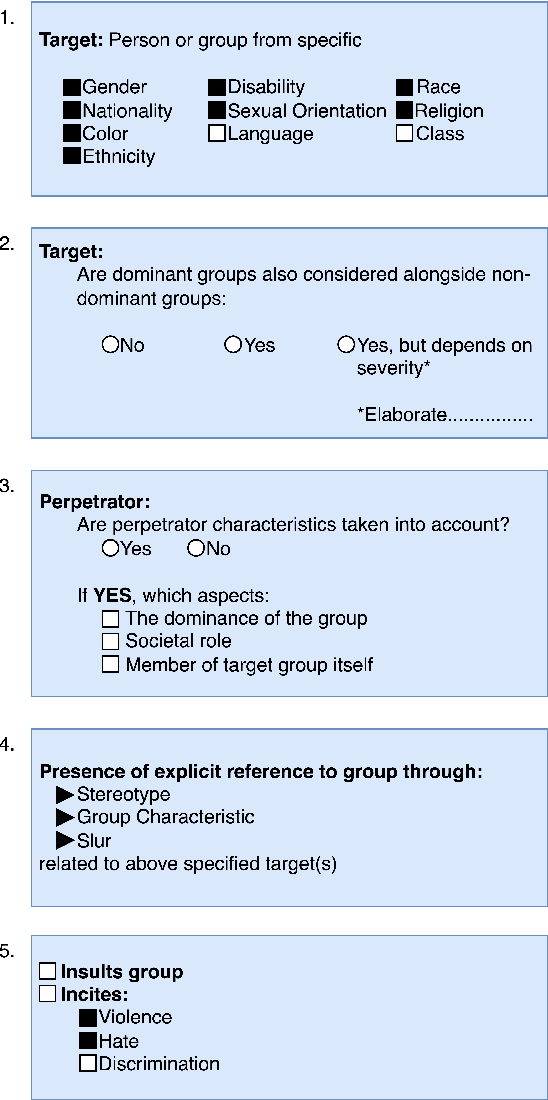

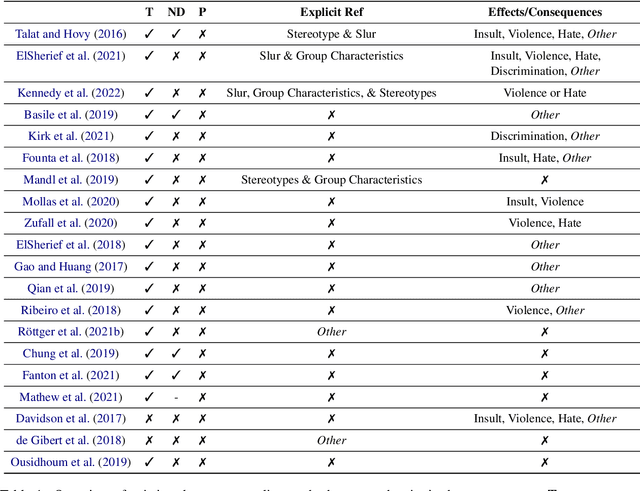

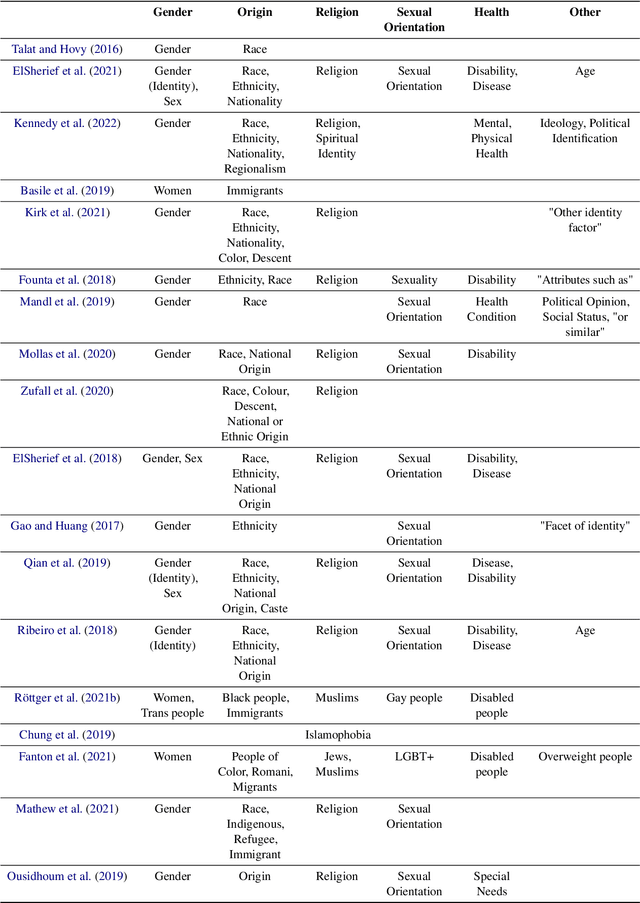

**Offensive Content Warning**: This paper contains offensive language solely to provide examples that clarify this research; these examples do not reflect the authors' opinions. Please be aware that they are offensive and may cause you distress.

The subjectivity of recognizing *hate speech* makes it a complex task, which is reflected in the differing and incomplete definitions used in NLP. We present *hate speech* criteria, developed with perspectives from law and social science, to help researchers create more precise definitions and annotation guidelines along five aspects: (1) target groups, (2) dominance, (3) perpetrator characteristics, (4) type of negative group reference, and (5) type of potential consequences/effects. Definitions can be structured to cover a broader or a narrower phenomenon, so each criterion can be consciously specified or left open. We argue that the goal and the exact task developers have in mind should determine how the scope of *hate speech* is defined. We also provide an overview of the properties of the English datasets listed on hatespeechdata.com, which may help in selecting the most suitable dataset for a specific scenario.
