H-vmunet: High-order Vision Mamba UNet for Medical Image Segmentation


Mar 20, 2024

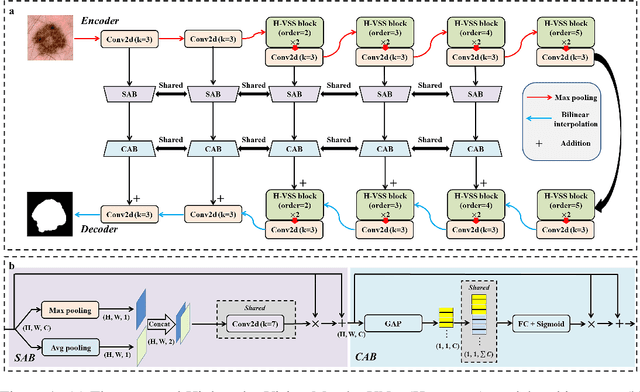

In medical image segmentation, model variants built on Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs) as base modules have been widely developed and applied. However, CNNs are often limited in their ability to handle long-range dependencies, while ViTs are held back by low sensitivity to local features and by quadratic computational complexity. Recently, state-space models (SSMs), especially 2D-selective-scan (SS2D), have challenged the long-standing dominance of CNNs and ViTs as the foundational modules of visual neural networks. In this paper, we extend the adaptability of SS2D by proposing a High-order Vision Mamba UNet (H-vmunet) for medical image segmentation. The proposed High-order 2D-selective-scan (H-SS2D) progressively reduces the redundant information introduced during SS2D operations through higher-order interactions. In addition, the proposed Local-SS2D module improves SS2D's ability to learn local features at each order of interaction. We conducted comparison and ablation experiments on three publicly available medical image datasets (ISIC2017, Spleen, and CVC-ClinicDB), and the results consistently show that H-vmunet is highly competitive in medical image segmentation tasks. The code is available at https://github.com/wurenkai/H-vmunet.
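The complexity limitation attributed to ViTs above comes from self-attention scoring every pair of tokens, so its cost grows with the square of the token count, whereas a sequential scan of the kind used by SSMs visits each token once per pass. The following is a rough arithmetic sketch of that gap, not the paper's method: the patch size of 16, the image sizes, and the helper names `num_tokens`, `attention_pairs`, and `scan_steps` are all assumptions chosen for illustration.

```python
def num_tokens(height, width, patch=16):
    """Number of non-overlapping patch tokens (patch size is an assumption)."""
    return (height // patch) * (width // patch)


def attention_pairs(n):
    """Token pairs scored by full self-attention: grows as n^2."""
    return n * n


def scan_steps(n, passes=1):
    """Steps taken by `passes` sequential scans over n tokens: grows as n."""
    return passes * n


if __name__ == "__main__":
    # Doubling the image side quadruples the tokens, which multiplies the
    # attention pair count by 16 but the scan step count only by 4.
    for side in (256, 512, 1024):
        n = num_tokens(side, side)
        print(side, n, attention_pairs(n), scan_steps(n))
```

These counts ignore constant factors such as channel width and the number of scan directions, so they indicate growth rates only and say nothing about measured runtime.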
