GRIm-RePR: Prioritising Generating Important Features for Pseudo-Rehearsal


Nov 27, 2019

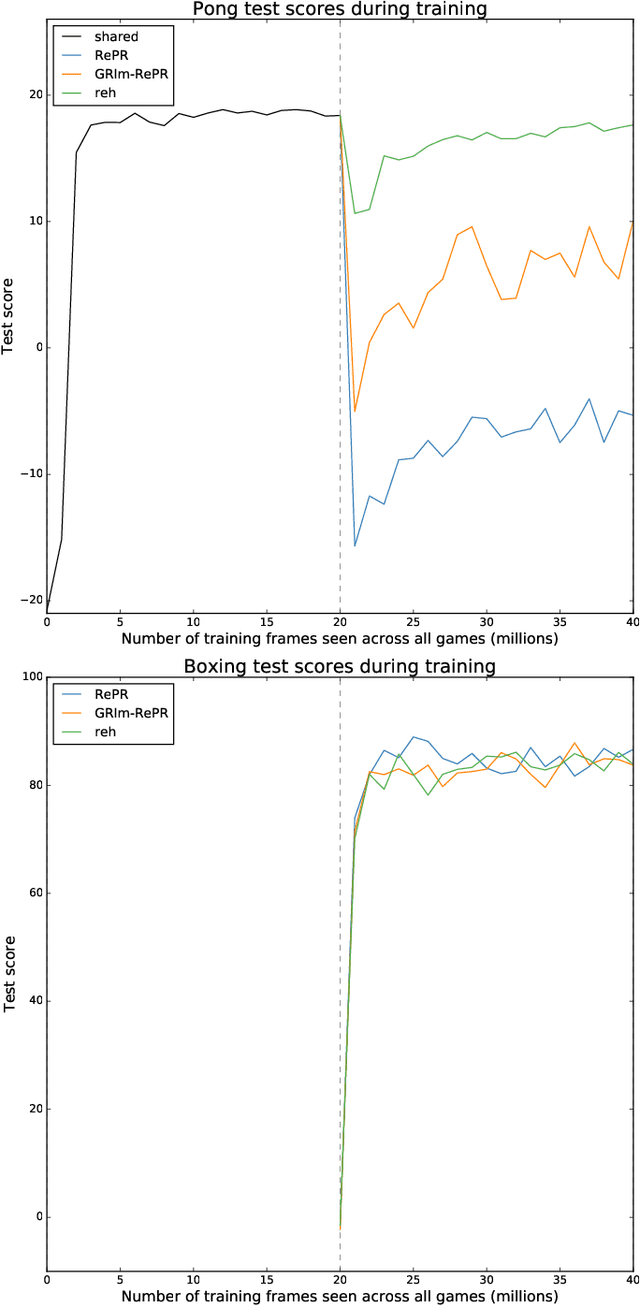

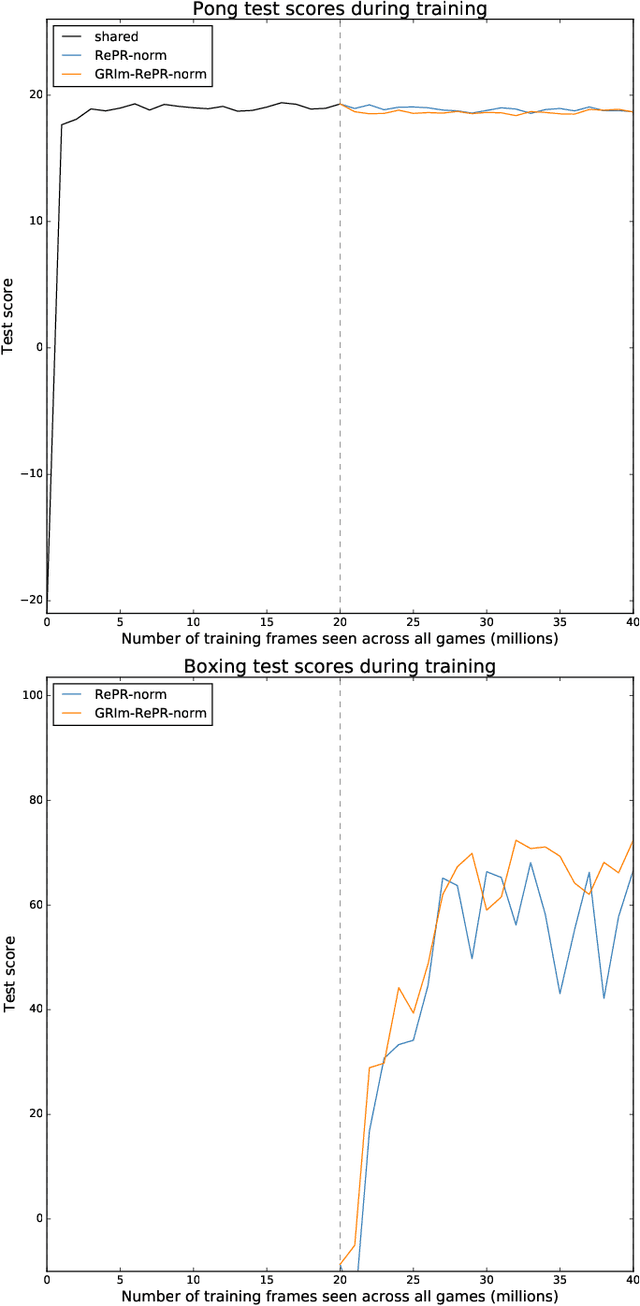

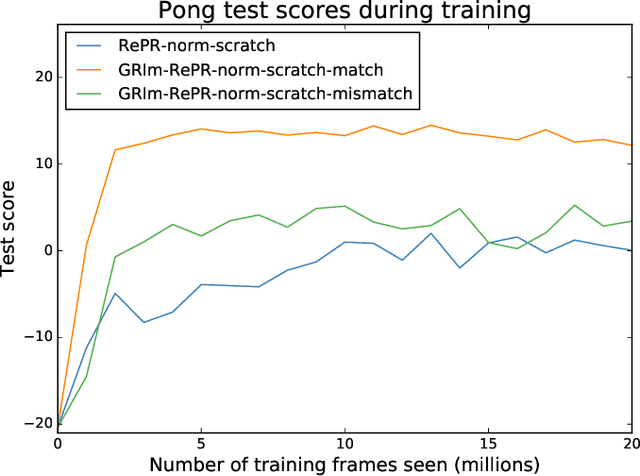

Pseudo-rehearsal allows neural networks to learn a sequence of tasks without forgetting how to perform earlier tasks. Forgetting is prevented by introducing a generative network that produces data from previously seen tasks, so that this data can be rehearsed alongside learning the new task. This has been found to be effective in both supervised and reinforcement learning. Our current work aims to further prevent forgetting by encouraging the generator to accurately generate features important for task retention. More specifically, the generator is improved by introducing a second discriminator into the Generative Adversarial Network, which learns to distinguish real from fake items using the intermediate activation patterns they produce when fed through a continual learning agent. Using Atari 2600 games, we experimentally find that improving the generator can considerably reduce catastrophic forgetting compared to the standard pseudo-rehearsal methods used in deep reinforcement learning. Furthermore, we propose normalising the Q-values taught to the long-term system, as we observe that this substantially reduces catastrophic forgetting by minimising the interference between tasks' reward functions.
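To make the Q-value normalisation idea concrete, the following is a minimal sketch of how targets from tasks with very different reward scales could be brought onto a comparable scale before being taught to a shared network. The abstract does not state which normalisation is used, so the per-task standardisation here (zero mean, unit variance) is an assumption chosen for illustration, and the function name `normalise_q_values` and the sample Q-values are hypothetical.

```python
from statistics import mean, pstdev


def normalise_q_values(q_values, eps=1e-8):
    """Rescale one task's Q-value targets to zero mean and unit variance.

    Assumption: standardisation is used purely for illustration; the
    abstract does not specify the normalisation scheme.
    """
    mu = mean(q_values)
    sigma = pstdev(q_values)
    # eps guards against division by zero when all Q-values are equal.
    return [(q - mu) / (sigma + eps) for q in q_values]


# Hypothetical Q-value targets for four actions in two tasks whose
# reward functions differ in scale by a factor of 1200.
task_a_q = [0.1, 0.4, 0.2, 0.3]
task_b_q = [120.0, 480.0, 240.0, 360.0]

norm_a = normalise_q_values(task_a_q)
norm_b = normalise_q_values(task_b_q)

# The greedy action within each task is unchanged by the rescaling.
best_a = max(range(len(norm_a)), key=norm_a.__getitem__)
best_b = max(range(len(norm_b)), key=norm_b.__getitem__)
```

Because the rescaling is a positive affine map within each task, the ordering of actions is preserved, while the targets from the two tasks no longer differ by orders of magnitude. That difference in magnitude is one plausible way for one task's reward function to dominate the regression loss of a shared network.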
