Graph Representation Learning by Ensemble Aggregating Subgraphs via Mutual Information Maximization

Paper and Code

Mar 24, 2021

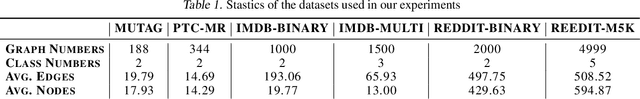

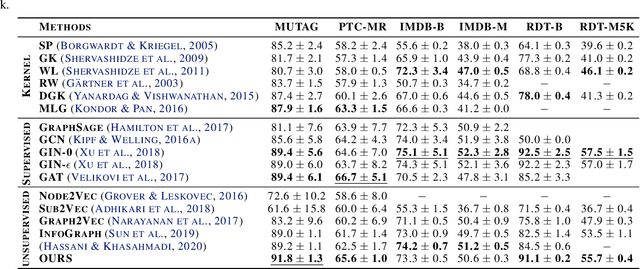

Graph Neural Networks have shown tremendous potential for dealing with graph data and have achieved outstanding results in recent years. In some research areas, labeled data are hard to obtain for technical reasons, which necessitates the study of unsupervised and semi-supervised learning on graphs. Whether the learned representations capture the intrinsic features of the original graphs is therefore the central issue in this area. In this paper, we introduce a self-supervised learning method to enhance the graph-level representations learned by Graph Neural Networks. To fully capture the original attributes of the graph, we use three information aggregators (attribute-conv, layer-conv and subgraph-conv) to gather information from different aspects. To get a comprehensive understanding of the graph structure, we propose an ensemble-learning-like subgraph method. To achieve efficient and effective contrastive learning, we propose a Head-Tail contrastive sample construction method that provides more abundant negative samples. With all the proposed components, which can be generalized to any Graph Neural Network, we achieve new state-of-the-art results on several benchmarks in the unsupervised case. We also evaluate our model on semi-supervised learning tasks and make a fair comparison with state-of-the-art semi-supervised methods.
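
As a rough illustration of the mutual-information-maximization idea mentioned in the abstract, the sketch below computes an InfoNCE-style contrastive loss between graph-level and subgraph-level embeddings. It is not the paper's implementation: the function name `info_nce`, the dot-product scoring, the `temperature` parameter and the toy vectors are all assumptions made for illustration. The Head-Tail sample construction and the three aggregators are not reproduced here, since the abstract does not specify them.

```python
import math


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def info_nce(graph_embs, subgraph_embs, temperature=1.0):
    """Mean InfoNCE loss (hypothetical helper, not from the paper).

    graph_embs[i] and subgraph_embs[i] are treated as the positive pair;
    every other subgraph embedding in the batch acts as a negative sample.
    """
    n = len(graph_embs)
    total = 0.0
    for i in range(n):
        scores = [dot(graph_embs[i], s) / temperature for s in subgraph_embs]
        # Numerically stable log-sum-exp over positive and negative scores.
        m = max(scores)
        log_denom = m + math.log(sum(math.exp(s - m) for s in scores))
        total += log_denom - scores[i]
    return total / n


# Toy data (assumed): two graphs with 2-dimensional embeddings.
graphs = [[1.0, 0.0], [0.0, 1.0]]
aligned = [[2.0, 0.0], [0.0, 2.0]]   # subgraphs matching their own graph
swapped = [[0.0, 2.0], [2.0, 0.0]]   # subgraphs matching the other graph

loss_aligned = info_nce(graphs, aligned)
loss_swapped = info_nce(graphs, swapped)
print(loss_aligned < loss_swapped)
```

Minimizing a loss of this form pushes each graph embedding toward its own subgraph embedding and away from the others in the batch, which is why a larger pool of negative samples can make the contrast more informative.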
