GPTKB: Building Very Large Knowledge Bases from Language Models

Paper and Code

Nov 07, 2024

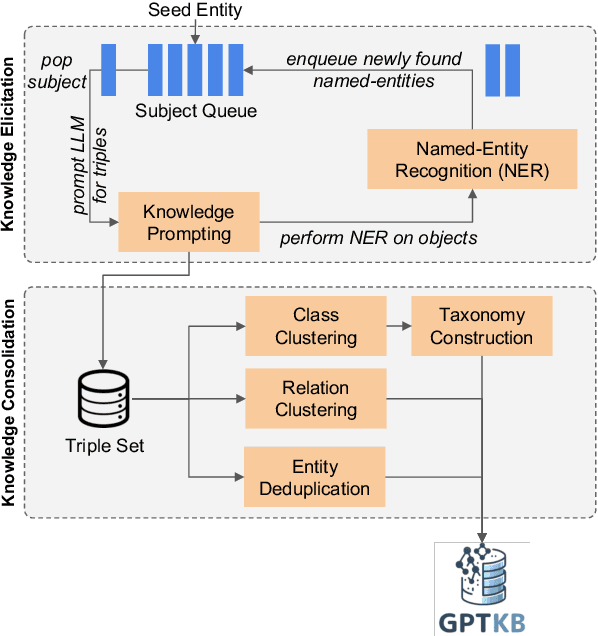

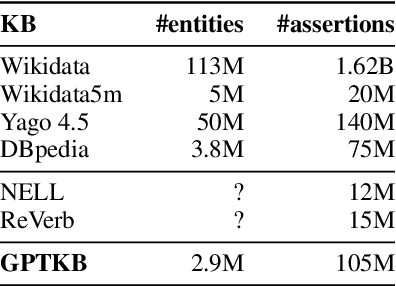

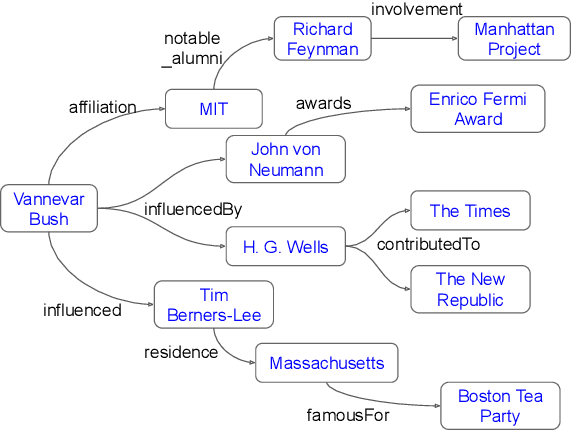

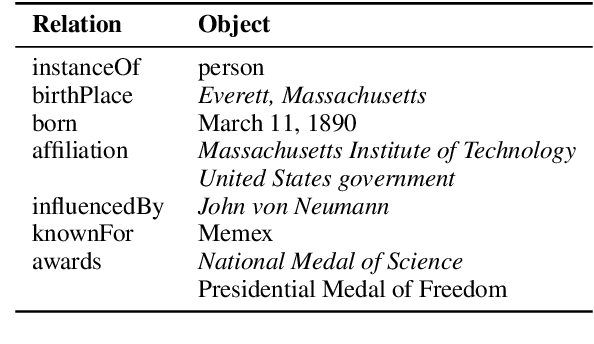

General-domain knowledge bases (KB), in particular the "big three" -- Wikidata, Yago and DBpedia -- are the backbone of many intelligent applications. While these three have seen steady development, comprehensive KB construction at large has seen few fresh attempts. In this work, we propose to build a large general-domain KB entirely from a large language model (LLM). We demonstrate the feasibility of large-scale KB construction from LLMs, while highlighting specific challenges arising around entity recognition, entity and property canonicalization, and taxonomy construction. As a prototype, we use GPT-4o-mini to construct GPTKB, which contains 105 million triples for more than 2.9 million entities, at a cost 100x less than previous KBC projects. Our work is a landmark for two fields: For NLP, for the first time, it provides \textit{constructive} insights into the knowledge (or beliefs) of LLMs. For the Semantic Web, it shows novel ways forward for the long-standing challenge of general-domain KB construction. GPTKB is accessible at https://gptkb.org.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge