GP-net: Grasp Proposal for Mobile Manipulators

Sep 21, 2022

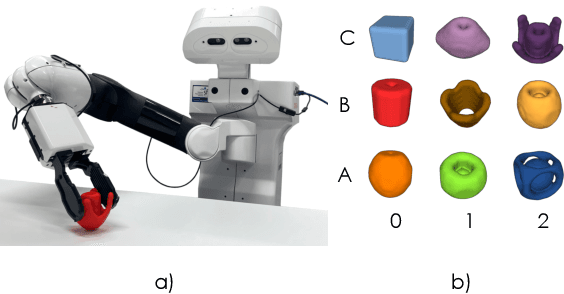

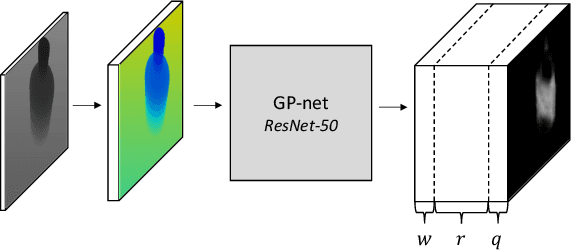

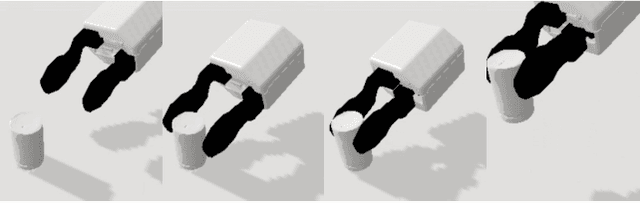

We present the Grasp Proposal Network (GP-net), a Convolutional Neural Network model that generates 6-DOF grasps for mobile manipulators. To train GP-net, we synthetically generate a dataset containing depth images and ground-truth grasp information for more than 1400 objects. In real-world experiments on a PAL TIAGo mobile manipulator, we use the EGAD! grasping benchmark to evaluate GP-net against two commonly used algorithms, the Volumetric Grasping Network (VGN) and the Grasp Pose Detection package (GPD). GP-net achieves a grasp success rate of 82.2%, compared to 57.8% for VGN and 63.3% for GPD. In contrast to state-of-the-art methods in robotic grasping, GP-net can be used out-of-the-box for grasping objects with mobile manipulators without limiting the workspace, requiring table segmentation, or needing a high-end GPU. To encourage the use of GP-net, we provide a ROS package along with our code and pre-trained models at https://aucoroboticsmu.github.io/GP-net/.
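For readers unfamiliar with the term, a 6-DOF grasp specifies three translational and three rotational degrees of freedom for the gripper. The following is a minimal sketch of one common way to hold such a pose and convert it to a homogeneous transform. The class `Grasp6DOF`, its field names, and the roll-pitch-yaw convention are assumptions made for illustration; they are not taken from the GP-net code or its ROS package.

```python
import math
from dataclasses import dataclass


@dataclass
class Grasp6DOF:
    """Hypothetical container for a 6-DOF grasp pose (not GP-net's own type)."""
    x: float      # position in metres
    y: float
    z: float
    roll: float   # orientation in radians
    pitch: float
    yaw: float

    def rotation_matrix(self):
        """Return the 3x3 rotation R = Rz(yaw) * Ry(pitch) * Rx(roll)."""
        cr, sr = math.cos(self.roll), math.sin(self.roll)
        cp, sp = math.cos(self.pitch), math.sin(self.pitch)
        cy, sy = math.cos(self.yaw), math.sin(self.yaw)
        return [
            [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
            [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
            [-sp, cp * sr, cp * cr],
        ]

    def to_matrix(self):
        """Return the 4x4 homogeneous transform as nested lists."""
        rot = self.rotation_matrix()
        trans = [self.x, self.y, self.z]
        rows = [rot[i] + [trans[i]] for i in range(3)]
        rows.append([0.0, 0.0, 0.0, 1.0])
        return rows


# Example: a grasp 0.5 m ahead and 0.2 m up, rotated 90 degrees about z.
grasp = Grasp6DOF(x=0.5, y=0.0, z=0.2, roll=0.0, pitch=0.0, yaw=math.pi / 2)
T = grasp.to_matrix()
```

The upper-left 3x3 block of `T` encodes the gripper orientation and the last column its position, so the six parameters fully determine the pose.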
