Gi and Pal Scores: Deep Neural Network Generalization Statistics


Apr 08, 2021

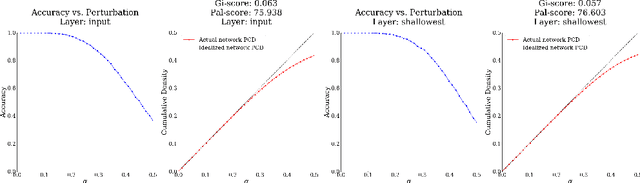

Deep learning has produced ample empirical evidence of human-like performance on a variety of regression, classification, and control tasks. Despite these successes, the field lacks strong theoretical error bounds and consistent measures of network generalization and learned invariances. In this work, we introduce two new measures, the Gi-score and the Pal-score, that capture a deep neural network's generalization capabilities. Inspired by the Gini coefficient and the Palma ratio, two measures of income inequality, our statistics robustly measure a network's invariance to perturbations and accurately predict generalization gaps, i.e., the difference between accuracy on the training and test sets.

* Accepted to RobustML Workshop at ICLR 2021
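
For readers unfamiliar with the two inequality statistics named in the abstract, the following is a minimal sketch of their classical income-inequality definitions, together with the generalization gap as the abstract describes it. It is not the paper's Gi-score or Pal-score, whose exact construction the abstract does not give. The function names (`gini_coefficient`, `palma_ratio`, `generalization_gap`) are hypothetical and chosen only for illustration.

```python
def gini_coefficient(values):
    """Classical Gini coefficient of non-negative values (0 = perfect equality)."""
    xs = sorted(values)
    n = len(xs)
    total = sum(xs)
    if n == 0 or total == 0:
        raise ValueError("need at least one value and a non-zero total")
    # Rank-weighted sum over the ascending values, with ranks starting at 1.
    weighted = sum(rank * x for rank, x in enumerate(xs, start=1))
    return 2.0 * weighted / (n * total) - (n + 1.0) / n


def palma_ratio(values):
    """Classical Palma ratio: total held by the top 10% over the bottom 40%."""
    xs = sorted(values)
    n = len(xs)
    if n < 10:
        raise ValueError("need at least 10 values to form a top-10% group")
    bottom = sum(xs[: (4 * n) // 10])  # lowest 40% of the values
    top = sum(xs[n - n // 10:])        # highest 10% of the values
    if bottom == 0:
        raise ValueError("bottom 40% sums to zero; ratio is undefined")
    return top / bottom


def generalization_gap(train_accuracy, test_accuracy):
    """Difference between training and test accuracy, as in the abstract."""
    return train_accuracy - test_accuracy


if __name__ == "__main__":
    incomes = list(range(1, 11))  # illustrative data, not from the paper
    print(gini_coefficient(incomes))
    print(palma_ratio(incomes))
    print(generalization_gap(0.99, 0.90))
```

Both statistics summarize how unevenly a quantity is spread across a population. The abstract indicates that the paper adapts this idea to a network's behavior under perturbations, but the details of that adaptation are in the paper itself rather than in this sketch.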
