GFPNet: A Deep Network for Learning Shape Completion in Generic Fitted Primitives


Jun 03, 2020

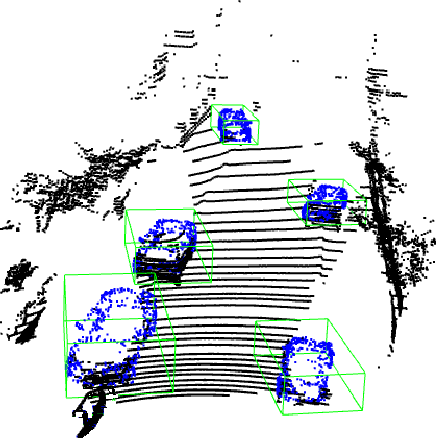

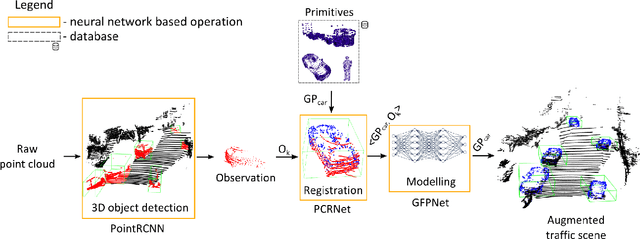

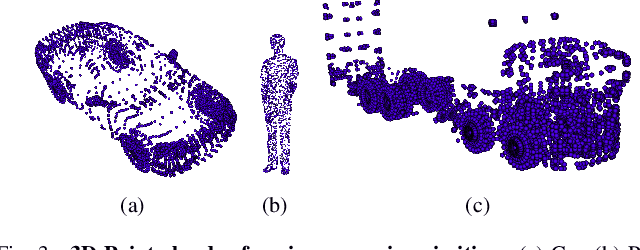

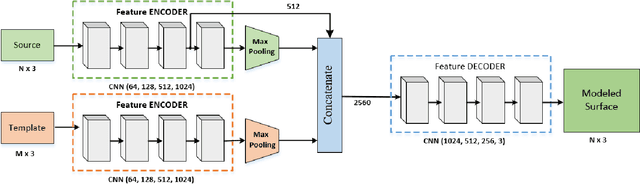

In this paper, we propose an object reconstruction apparatus that uses so-called Generic Primitives (GPs) to complete shapes. A GP is a 3D point cloud depicting a generalized shape of a class of objects. To reconstruct the objects in a scene, we first fit a GP onto each occluded object to obtain an initial raw structure. Second, we use a model-based deformation technique to fold the surface of the GP over the occluded object. The deformation model is encoded within the layers of a Deep Neural Network (DNN), which we call GFPNet. The objective of the network is to transfer the particularities of the object from the scene to the raw volume represented by the GP. We show that GFPNet competes with state-of-the-art shape completion methods by providing performance results on the ModelNet and KITTI benchmarking datasets.
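The first stage described above, fitting a GP onto an observed object to obtain a raw structure, can be illustrated with a minimal sketch. This is not the paper's fitting procedure, which the abstract does not specify. It assumes a simple centroid-and-scale alignment, and the names `coarse_fit` and `chamfer_distance` are hypothetical helpers introduced here for illustration. The Chamfer distance is a common way to compare point clouds and is used only to check how well the fitted primitive covers the observation. The learned deformation stage (GFPNet itself) is not modeled.

```python
import math

def centroid(points):
    """Mean position of a list of (x, y, z) points."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(3))

def rms_radius(points, center):
    """Root-mean-square distance of the points from a center."""
    return math.sqrt(sum(math.dist(p, center) ** 2 for p in points) / len(points))

def coarse_fit(primitive, observed):
    """Assumed coarse fit: translate and isotropically scale the primitive
    so that its centroid and RMS radius match those of the observed points."""
    c_prim = centroid(primitive)
    c_obs = centroid(observed)
    scale = rms_radius(observed, c_obs) / rms_radius(primitive, c_prim)
    return [
        tuple(c_obs[i] + scale * (p[i] - c_prim[i]) for i in range(3))
        for p in primitive
    ]

def chamfer_distance(a, b):
    """Symmetric Chamfer distance: mean nearest-neighbor distance from a to b
    plus the same from b to a (brute force, fine for small clouds)."""
    a_to_b = sum(min(math.dist(p, q) for q in b) for p in a) / len(a)
    b_to_a = sum(min(math.dist(q, p) for p in a) for q in b) / len(b)
    return a_to_b + b_to_a

# Toy data: a unit-cube "primitive" and a scaled, shifted copy as the observation.
primitive = [(x, y, z) for x in (0.0, 1.0) for y in (0.0, 1.0) for z in (0.0, 1.0)]
observed = [(2 * x + 5, 2 * y + 5, 2 * z + 5) for (x, y, z) in primitive]

fitted = coarse_fit(primitive, observed)
print(chamfer_distance(fitted, observed))
```

With a genuinely occluded observation, the centroid and radius estimates are biased toward the visible part, so a fit like this only provides a rough starting structure. That residual mismatch is the kind of gap a subsequent deformation step would need to close.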
