Geometry-Inspired Top-k Adversarial Perturbations


Jun 28, 2020

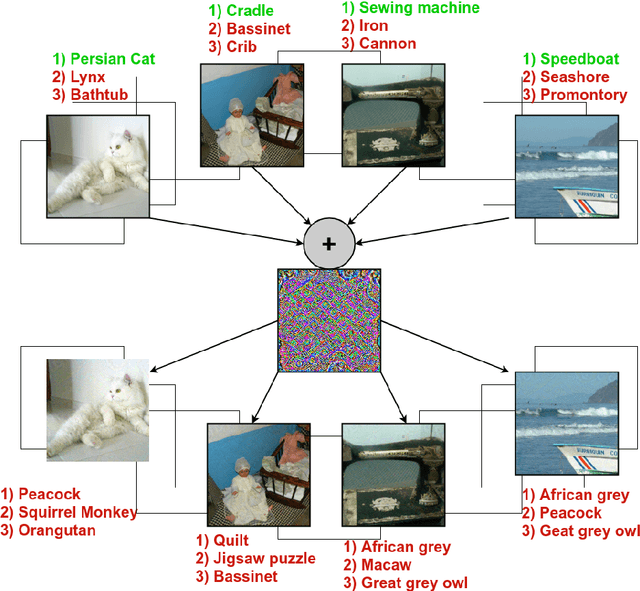

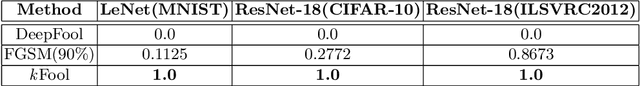

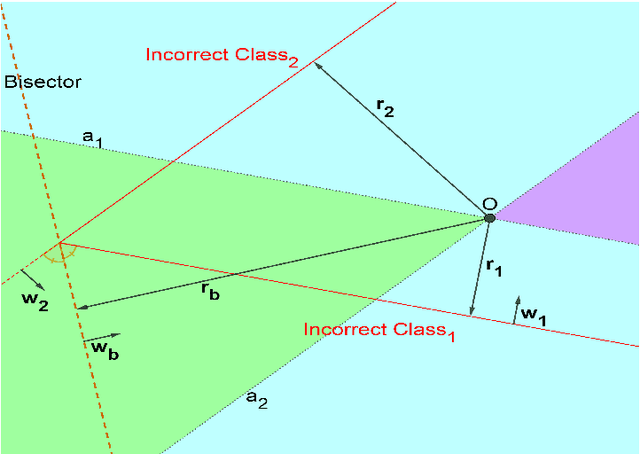

State-of-the-art deep learning models are untrustworthy due to their vulnerability to adversarial examples. Intriguingly, besides simple adversarial perturbations, there exist Universal Adversarial Perturbations (UAPs), which are input-agnostic perturbations that cause misclassification of the majority of inputs. Existing adversarial examples (including UAPs) mainly aim to replace the correct Top-1 predicted class with an incorrect one, which does not guarantee any change to the Top-k prediction. However, in many real-world scenarios involving digital data, Top-k predictions are more important. We propose an effective geometry-inspired method of computing Top-k adversarial examples for any k. We evaluate its effectiveness and efficiency by comparing it with other adversarial example crafting techniques. Based on this method, we propose Top-k Universal Adversarial Perturbations: tiny, image-agnostic perturbations that cause the true class to be absent from the Top-k predictions. We experimentally show that our approach outperforms baseline methods and even improves on existing techniques for generating UAPs.
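The difference between a Top-1 and a Top-k adversarial example can be made concrete with a small check. The following is a minimal sketch of the success criterion only, not of the paper's geometry-inspired method; the function names and the example score vectors are hypothetical and chosen purely for illustration.

```python
import numpy as np


def true_class_in_top_k(scores, true_label, k):
    """Return True if true_label is among the k highest-scoring classes."""
    scores = np.asarray(scores, dtype=float)
    # Sort class indices by descending score and keep the first k.
    top_k = np.argsort(-scores, kind="stable")[:k]
    return bool(true_label in top_k)


def is_top_k_adversarial(perturbed_scores, true_label, k):
    """A perturbed input counts as Top-k adversarial when the true class
    no longer appears among the classifier's k highest-scoring classes."""
    return not true_class_in_top_k(perturbed_scores, true_label, k)


# Hypothetical classifier scores for a 4-class problem; the true class is 1.
true_label = 1
clean = [0.10, 0.60, 0.20, 0.10]
top1_attack = [0.10, 0.30, 0.40, 0.20]  # true class drops to rank 2
top2_attack = [0.35, 0.05, 0.40, 0.20]  # true class drops to rank 4

# The first perturbation fools Top-1 but not Top-2.
fools_top1 = is_top_k_adversarial(top1_attack, true_label, k=1)
fools_top2 = is_top_k_adversarial(top1_attack, true_label, k=2)

# The second perturbation pushes the true class out of the Top-2 as well.
stronger_fools_top2 = is_top_k_adversarial(top2_attack, true_label, k=2)
```

Here the first perturbed score vector changes the Top-1 prediction while the true class remains in the Top-2, which is exactly the gap the abstract describes: success at Top-1 does not imply success at Top-k.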
