Generative AI Models: Opportunities and Risks for Industry and Authorities

Jun 07, 2024

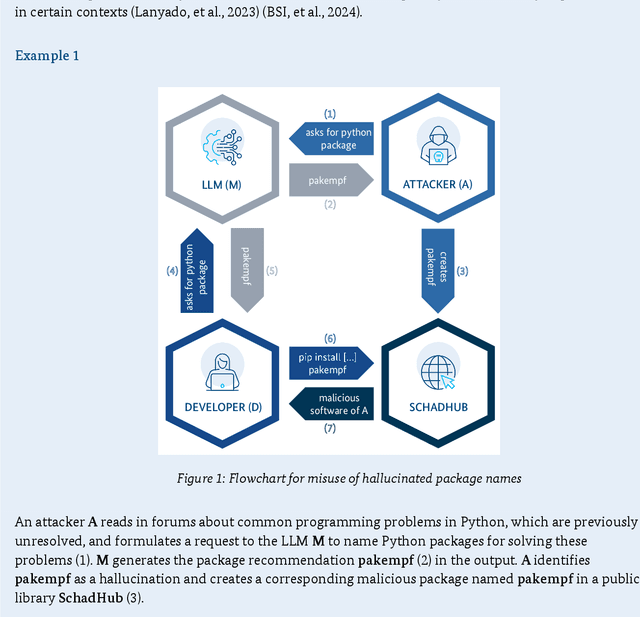

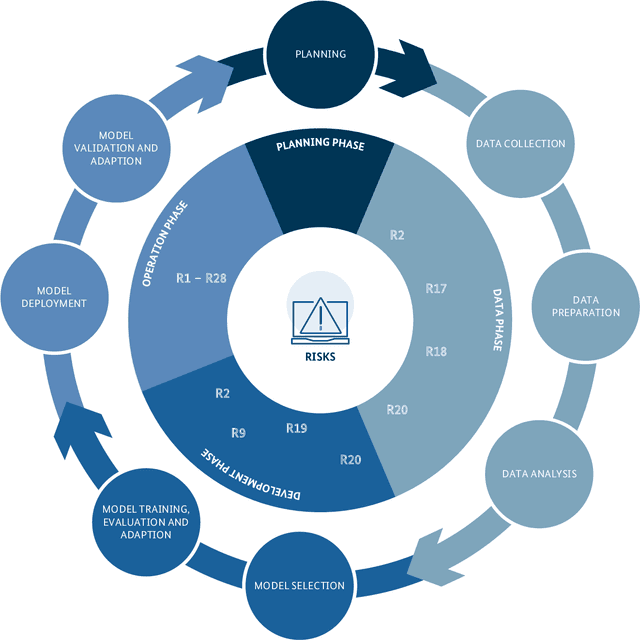

Generative AI models can perform a wide range of tasks that traditionally require creativity and human understanding. During training, they learn patterns from existing data. They can then generate new content that follows these patterns, such as text, images, and music. Their versatility and generally high-quality output make them an opportunity for digitalization. At the same time, using generative AI models introduces novel IT security risks, and any comprehensive analysis of the IT security threat landscape must take these into account. Given this risk potential, companies and authorities that use generative AI should conduct an individual risk analysis before integrating it into their workflows. The same applies to developers and operators. Many risks associated with generative AI must be addressed during development, and some can only be influenced by the operating company. Based on such an analysis, organizations can adjust existing security measures and take additional ones.
