Generating near-infrared facial expression datasets with dimensional affect labels


Jun 28, 2022

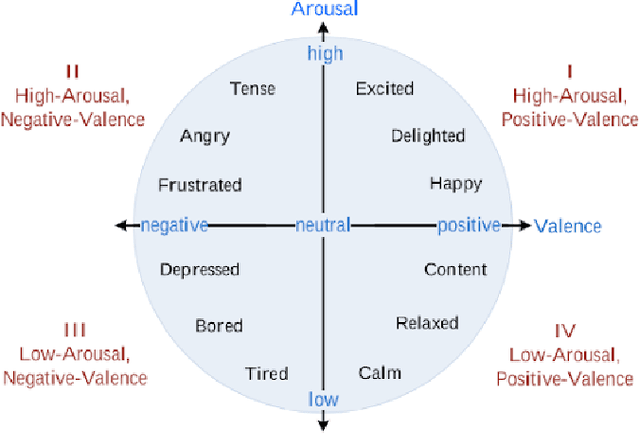

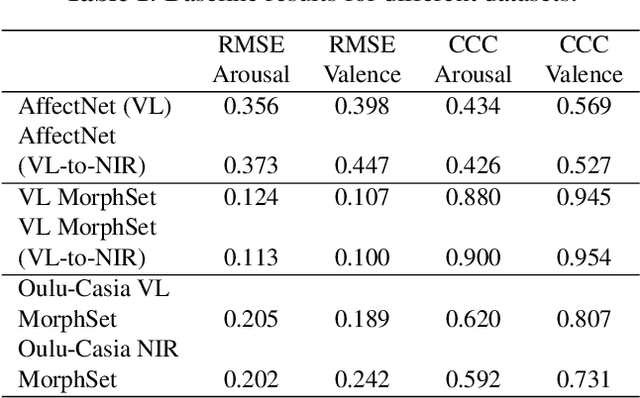

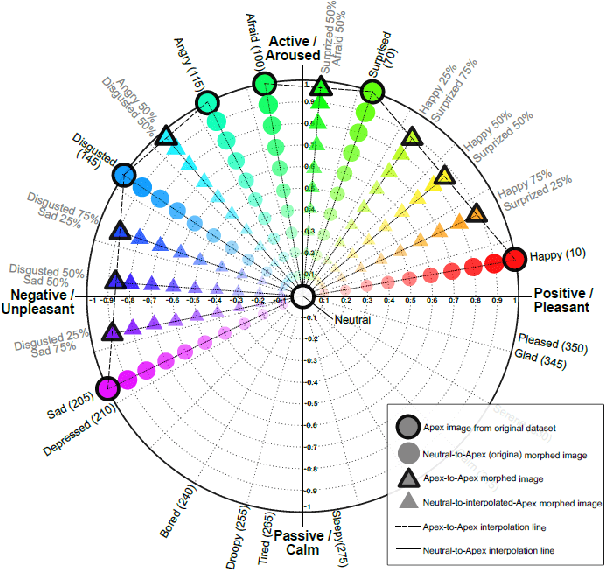

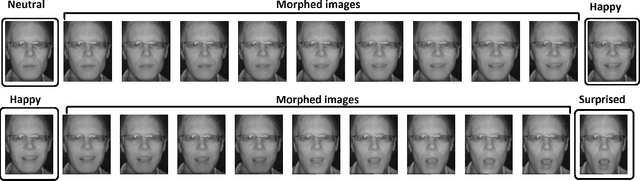

Facial expression analysis has long been an active research area in computer vision. Traditional methods mainly classify images into prototypical discrete emotions; as a result, they do not accurately depict the complex emotional states of humans. Furthermore, illumination variation remains a challenge for face analysis in the visible light spectrum. To address these issues, we propose using a dimensional model based on valence and arousal to represent a wider range of emotions, in combination with near-infrared (NIR) imagery, which is more robust to illumination changes. Since no NIR facial expression datasets with valence-arousal labels are currently available, we present two complementary data augmentation methods (face morphing and a CycleGAN approach) to create NIR image datasets with dimensional emotion labels from existing categorical and/or visible-light datasets. Our experiments show that these generated NIR datasets are comparable to existing datasets in terms of data quality and baseline prediction performance.
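The abstract does not spell out how morphed images receive their valence-arousal labels. As a rough illustration only, the sketch below assumes that intermediate frames between a neutral face and a peak-expression face get linearly interpolated labels. It uses a plain pixel-wise cross-dissolve in place of real face morphing (which would also warp facial landmarks), and every name in it (`blend`, `interpolate_va`, `morph_sequence`) is hypothetical rather than taken from the paper or its code.

```python
from typing import List, Tuple

Image = List[List[float]]  # grayscale pixels, row-major (assumed representation)
VA = Tuple[float, float]   # (valence, arousal); the value range is an assumption


def blend(a: Image, b: Image, alpha: float) -> Image:
    """Pixel-wise cross-dissolve: (1 - alpha) * a + alpha * b.

    A stand-in for face morphing; no landmark warping is performed.
    """
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("images must have the same shape")
    return [
        [(1 - alpha) * pa + alpha * pb for pa, pb in zip(ra, rb)]
        for ra, rb in zip(a, b)
    ]


def interpolate_va(src: VA, dst: VA, alpha: float) -> VA:
    """Linearly interpolate a valence-arousal pair (assumed labelling rule)."""
    return (
        (1 - alpha) * src[0] + alpha * dst[0],
        (1 - alpha) * src[1] + alpha * dst[1],
    )


def morph_sequence(
    neutral: Image, peak: Image, neutral_va: VA, peak_va: VA, steps: int
) -> List[Tuple[Image, VA]]:
    """Return `steps` (image, label) pairs running from neutral to peak."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    frames = []
    for i in range(steps):
        alpha = i / (steps - 1)  # 0.0 at neutral, 1.0 at peak
        frames.append(
            (blend(neutral, peak, alpha), interpolate_va(neutral_va, peak_va, alpha))
        )
    return frames
```

Calling `morph_sequence(neutral, peak, (0.0, 0.0), peak_va, steps=5)` would yield five labelled frames, turning a single categorical peak-expression image into several samples with graded dimensional labels.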
