Gender-Inclusive Grammatical Error Correction through Augmentation


Jun 12, 2023

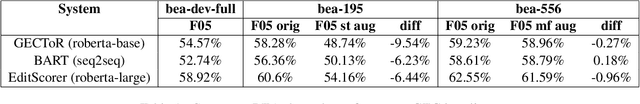

In this paper, we show that grammatical error correction (GEC) systems display gender bias related to the use of masculine and feminine terms and the gender-neutral singular "they". We develop parallel datasets of texts with masculine and feminine terms and singular "they", and use them to quantify gender bias in three competitive GEC systems. We contribute a novel data augmentation technique for singular "they" that leverages linguistic insights about its distribution relative to plural "they". We demonstrate that both this technique and a refinement of a similar augmentation technique for masculine and feminine terms can generate training data that reduces bias in GEC systems, especially with respect to singular "they", while maintaining the same level of quality.
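To make the idea of augmentation for masculine and feminine terms concrete, here is a minimal sketch of swap-based augmentation applied to a GEC training pair (an erroneous source sentence and its corrected target). It is an illustration under stated assumptions, not the paper's implementation: the `SWAPS` table and the function names `swap_gendered_terms` and `augment_pair` are hypothetical, and the table deliberately omits ambiguous forms such as "his", "her", and "him", which need part-of-speech information to swap correctly. The singular "they" augmentation described in the abstract is not reproduced here.

```python
import re

# Hypothetical, deliberately tiny swap table (an assumption for illustration).
# Ambiguous pronouns ("his", "her", "him") are left out on purpose.
SWAPS = {
    "he": "she", "she": "he",
    "himself": "herself", "herself": "himself",
    "man": "woman", "woman": "man",
    "father": "mother", "mother": "father",
}

# Match any table entry as a whole word, ignoring case.
_PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, SWAPS)) + r")\b", re.IGNORECASE
)


def swap_gendered_terms(sentence: str) -> str:
    """Replace each listed gendered term with its counterpart."""

    def replace(match: re.Match) -> str:
        word = match.group(0)
        swapped = SWAPS[word.lower()]
        # Preserve an initial capital letter (e.g. at the start of a sentence).
        return swapped.capitalize() if word[0].isupper() else swapped

    return _PATTERN.sub(replace, sentence)


def augment_pair(source: str, target: str) -> tuple[str, str]:
    """Apply the same swap to both sides of a (source, target) GEC pair,
    so the grammatical error and its correction stay aligned."""
    return swap_gendered_terms(source), swap_gendered_terms(target)
```

For example, `augment_pair("The man say he is tired.", "The man says he is tired.")` yields a new pair in which "man" becomes "woman" and "he" becomes "she" on both sides, while the agreement error ("say" versus "says") is left untouched. Swapping source and target together is the key design choice: the augmented pair teaches the same correction in a different gender context.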
