GainAdaptor: Learning Quadrupedal Locomotion with Dual Actors for Adaptable and Energy-Efficient Walking on Various Terrains

Dec 12, 2024

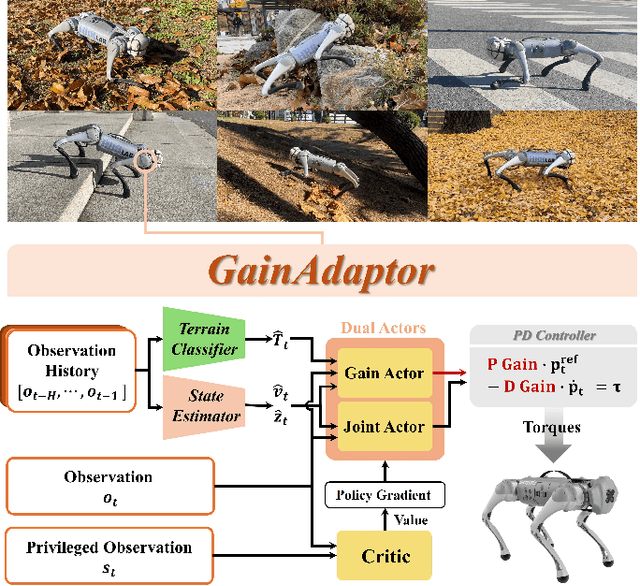

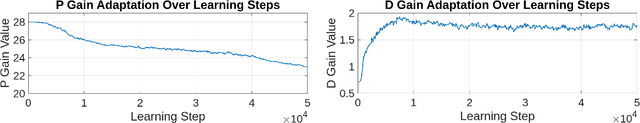

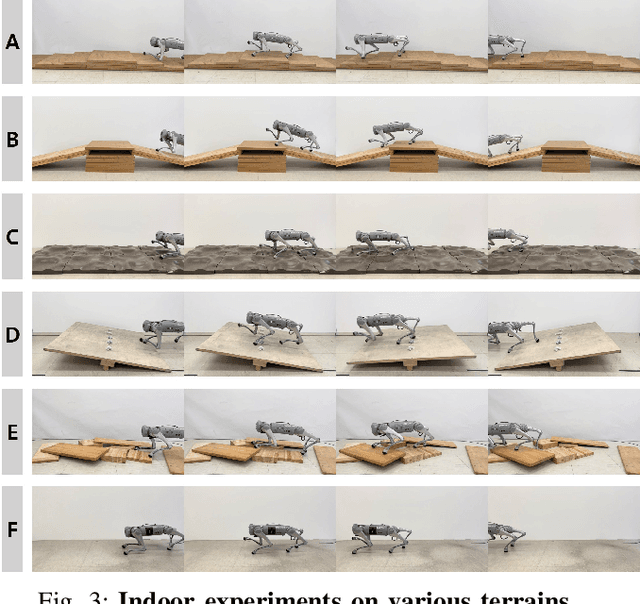

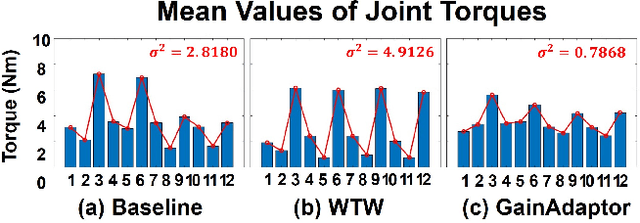

Deep reinforcement learning (DRL) has emerged as an innovative solution for controlling legged robots in challenging environments using minimalist architectures. Traditional control methods for legged robots, such as inverse dynamics, either command joint torques directly or use proportional-derivative (PD) controllers to regulate joint positions at a higher level. In the case of DRL, direct torque control poses significant challenges, so joint position control is generally preferred. However, this approach requires careful tuning of the joint PD gains, which can limit both adaptability and efficiency. In this paper, we propose GainAdaptor, an adaptive gain control framework that autonomously tunes joint PD gains to improve terrain adaptability and energy efficiency. The framework employs a dual-actor algorithm to adjust the PD gains dynamically according to ground conditions. With a divided action space, GainAdaptor efficiently learns stable and energy-efficient locomotion. We validate the proposed method through experiments on a Unitree Go1 robot, demonstrating improved locomotion performance across diverse terrains.
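To make the idea of a divided action space concrete, the sketch below shows how one actor's output could set joint position targets while a second actor's output sets per-joint PD gains, which are then used in the standard PD law τ = K_p (q* − q) − K_d q̇. This is an illustrative sketch under stated assumptions, not the authors' implementation: the gain ranges `KP_RANGE` and `KD_RANGE`, the `action_scale` of 0.25, the tanh squashing, per-joint (rather than per-leg) gains, and the zero target velocity are all hypothetical choices. The joint count assumes the Go1's 12 actuated joints (3 per leg).

```python
import numpy as np

NUM_JOINTS = 12  # Unitree Go1: 3 actuated joints per leg, 4 legs

# Hypothetical gain bounds; the abstract does not specify the ranges used.
KP_RANGE = (10.0, 60.0)
KD_RANGE = (0.2, 2.0)


def scale_to_range(raw, low, high):
    """Squash an unbounded actor output into [low, high] using tanh (assumed mapping)."""
    return low + 0.5 * (np.tanh(raw) + 1.0) * (high - low)


def pd_torque(q_target, q, qd, kp, kd):
    """Joint-space PD law, assuming a zero target joint velocity."""
    return kp * (q_target - q) - kd * qd


def combine_actions(position_action, gain_action, q_default, action_scale=0.25):
    """Merge the outputs of two hypothetical actors into PD controller inputs.

    position_action: (NUM_JOINTS,) offsets from the default pose (position actor).
    gain_action:     (2 * NUM_JOINTS,) raw Kp and Kd values (gain actor).
    """
    q_target = q_default + action_scale * position_action
    raw_kp, raw_kd = np.split(gain_action, 2)  # first half -> Kp, second half -> Kd
    kp = scale_to_range(raw_kp, *KP_RANGE)
    kd = scale_to_range(raw_kd, *KD_RANGE)
    return q_target, kp, kd


if __name__ == "__main__":
    q_default = np.zeros(NUM_JOINTS)
    q_target, kp, kd = combine_actions(
        np.ones(NUM_JOINTS), np.zeros(2 * NUM_JOINTS), q_default
    )
    tau = pd_torque(q_target, np.zeros(NUM_JOINTS), np.zeros(NUM_JOINTS), kp, kd)
    print(kp[0], kd[0], tau[0])
```

Keeping the gain actor's output separate means the policy can, for example, lower stiffness on soft ground without changing where it places the feet; bounding the gains with a squashing function is one common way to keep the learned gains physically safe.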
